January 19, 2024, by Laura Nicholson

Arts Faculty Takeover: The Language Learning Rollercoaster: Weekly Tests to the Rescue!

Throughout the 2023–24 academic year, we are running a new feature on the Learning Technology (LT) blog: a faculty takeover month! Each month, we will feature posts from members of a different faculty at the university. Every Friday, posts will highlight interesting work and ideas related to technology in teaching and learning and showcase unique projects from the various disciplines across the UoN. In October, we had our Faculty of Science takeover; in November, it was Medicine and Health Sciences; and this month we hear from the Faculty of Arts.

Strategies for Success

Navigating the Language Learning Rollercoaster: Weekly Tests to the Rescue!

By S. Clark, assistant professor, Russian and Slavonic Studies

Helping first-year students find a balance… Let’s face it, between the epic socialising the university scene offers and the somewhat less thrilling but oh-so-necessary studying, it can feel like juggling flaming torches while riding a unicycle. Fun, right?

Now, here’s the plot twist: the notorious cramming sessions before the big end-of-term test. Sound familiar? But guess what? That last-minute panic dance may not be the best strategy. According to the academic gurus (Seabrook et al., 2005), relying on cramming might leave you with more holes in your knowledge than a chunk of Swiss cheese.

But fear not, there’s a way to turn this linguistic rollercoaster (or, we could assume, that of any other subject) into a ride our students will actually enjoy. Picture this: weekly practice tests sprinkled throughout the semester, mini challenges to keep their language skills on their toes.

Why?

Research suggests that the way we choose to tackle our studies can make or break the learning game. So let us break it down! Don’t just allow your students to slam their brains with the module information; instead, create a roadmap – a well-structured weekly plan that turns their learning into bite-sized, manageable chunks.

Why? Learning a new language is like building a house. You don’t start with the roof; you lay a solid foundation. And those weekly tests? They’re like the bricks that make your language skills stand tall and proud.

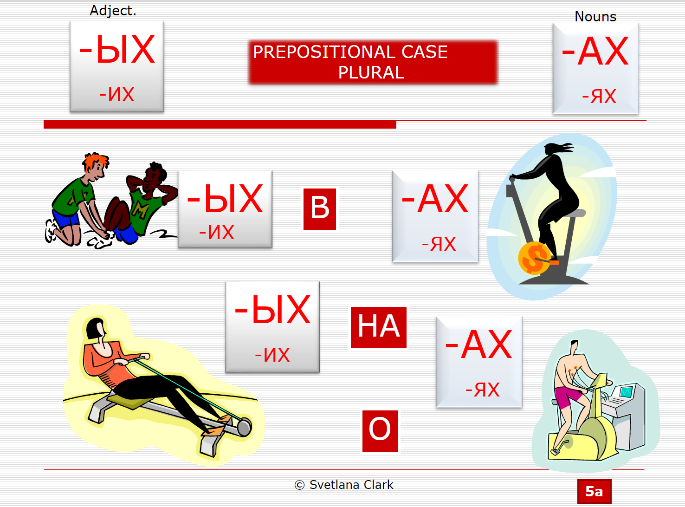

Those weekly tests also help to flex those memory muscles. It’s like going to the linguistic gym. We even have some gym-related sounds on our lecture slides explaining some case endings! (See Fig. 1 below; the Cyrillic endings are read like ‘Ykh-Akh!’)

Fig. 1 – Prepositional case endings slide

Plus, immediate feedback means you can fine-tune your language game as you go.

All those weekly self-assessment challenges are our students’ training ground for the big summative test, and they also bring huge benefits for students’ knowledge acquisition and motivation.

This is nothing unexpected; research in pedagogy suggests that the decisions learners make about how they study can affect their learning, and that breaking learning into manageable chunks, with a well-structured weekly plan, can significantly enhance language acquisition (Dunlosky et al., 2013; Thompson, 2017).

Module overview

This year’s sample consisted of 10 students on a Beginners’ Russian module (1st year). This 10-week module took place in Autumn 2023–24, and each week had a 2-hour lecture-workshop, 3 separate grammar workshops, and 1 oral class. The module started with learning the Cyrillic alphabet and progressed to developing some reading, writing, and speaking skills. All of this was accompanied by the introduction and application of quite challenging Russian grammar (genders, plurals, past and present tenses, three out of six grammatical cases, spelling rules, etc.). The Autumn summative test took place in class during the last (10th) week of the Autumn term.

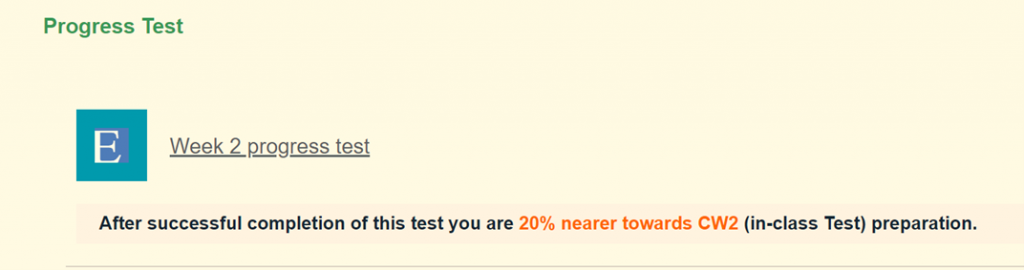

Each week, students had the opportunity to complete their practice test, created on the ExamSys platform and displayed on Moodle (see example Fig. 2 below). Adding an encouraging ‘count-down’ to the test helped to keep students motivated.

The practice tests were created by the module teacher (myself) with the invaluable support of Sally Hanford, our Faculty Learning Technology Consultant. The tests are based on a material chunking principle. Each weekly test had, on average, 10 questions or tasks – 100 marks (compared to 37 questions – 417 marks in the final summative test). The weekly practice tests were based on that particular week’s material, checking the understanding and application of the theory. Although they were teacher-prepared, we can call these tests self-assessment tests, as students had instant access to the test results displayed by ExamSys. This allowed them to see their strong and weak areas immediately, without delay or loss of interest, and to use their own initiative either to improve their results (after revising the material) or to leave them at that level.

The ExamSys self-testing tasks provide students with opportunities to apply and assess their understanding using various learning taxonomies, offering such diverse questions as declarative-based questions, multiple-choice questions, short answers, and problem sets.

Benefits

The benefits of these tests are manifold. Among them are:

- Giving learners the opportunity to assess their understanding by doing practice problems without any fear of external appraisal.

- Providing learners with examples of effective learning strategies and encouraging good study practices, etc.

Fig. 2 – Example of test access on Moodle:

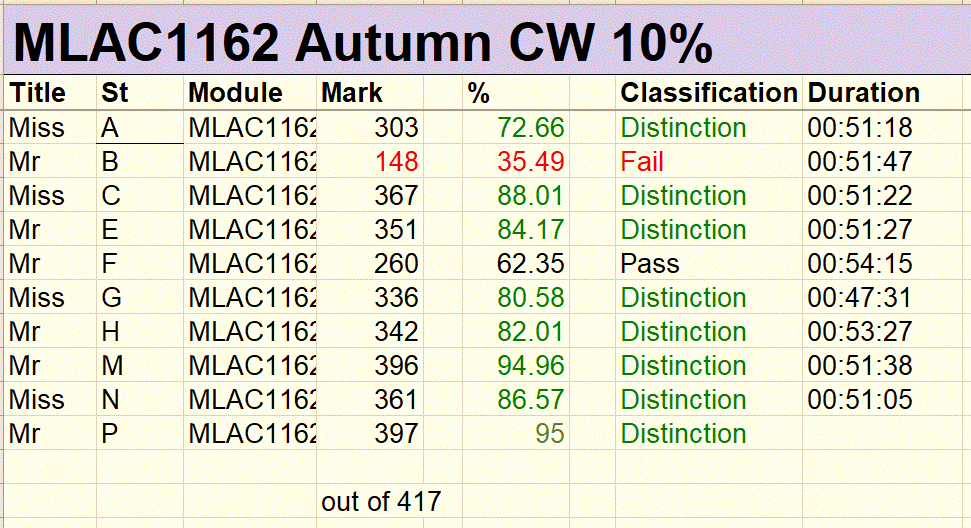

The final summative test had 37 questions (417 marks), covered all the topics of the Autumn term, and had to be completed during the normal lesson within 50 minutes. The test marks served as our outcome measure; see Fig. 3 below, with the names removed. All 10 students took the test on the day.

Fig. 3 – Summative In-Class End-of-Term Test Results:

The results in the table are based on ten anonymized students. Eight students achieved marks between 300 and 400, which were classified as distinction grades. One student scored 260, which was classified as a pass, and another achieved 148, which was a fail. The total number of marks possible was 417. For all students, the time spent on the test ranged from 47 minutes to just over 54 minutes.

Results

As seen from the table above, the results are excellent, with 80% of the students achieving 1st class marks.

One of the students (mark 62.35%) had no access to Moodle for the last 3 weeks. Unfortunately, this is something we were not aware of, as he didn’t ask for help at the time. I firmly believe that without that 3-week gap in his self-testing, he would have achieved a much higher test mark.
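For readers who want to relate the raw marks to the percentage quoted above, the conversion can be sketched in a few lines of Python. This is only an illustrative sketch, not part of the module's tooling: the function name `to_percent` is hypothetical, and the only figures taken from this post are the 417-mark total and the raw scores of 260 and 148.

```python
TOTAL_MARKS = 417  # maximum marks on the summative test, as stated in the post


def to_percent(raw_mark: int, total: int = TOTAL_MARKS) -> float:
    """Convert a raw mark into a percentage, rounded to two decimal places."""
    return round(raw_mark / total * 100, 2)


# Raw scores quoted in the post for the pass and the fail.
for raw in (260, 148):
    print(raw, "->", to_percent(raw), "%")
```

On this reading, the raw score of 260 comes out at 62.35%, which matches the mark quoted for the student who lost Moodle access.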

Unfortunately, we had one fail mark. This was expected, as the student was not engaging with his studies (unfortunately, this was not limited to our module).

Student feedback

The students’ feedback on practice tests was very positive. Below are some of the responses:

- It was really useful, as it gave me an easy way of checking my knowledge. It also kept me accountable and made me continually revise rather than cram just before the test.

- I thought the progress tests were a really useful tool for testing and revising my understanding of the language; they certainly made me feel more prepared for the test.

- Really helpful – helped me to prepare for the final test and find the gaps in my knowledge which I can build on. Overall, they were really useful, not just for test preparation but to revise each week and help me remember what we had learnt in lessons.

The summative assessment results and the students’ feedback reassure us that incorporating weekly practice tests is an effective strategy, especially when compared to more passive forms of learning, such as rereading or highlighting material. It promotes structured learning, provides immediate feedback, builds confidence, and aligns with the scientifically proven benefits of regular testing. By adopting this innovative approach, educators can empower students not only to learn new material but also to develop a strong foundation for a lifelong linguistic journey.

Author: S. Clark

Bibliography

Dunlosky J, Rawson KA, Marsh EJ, et al. (2013) Improving students’ learning with effective learning techniques: Promising directions from cognitive and educational psychology. Psychological Science in the Public Interest 14(1): 4–58.

Seabrook R, Brown GDA, Solity JE (2005) Distributed and massed practice: From laboratory to classroom. Applied Cognitive Psychology 19(1): 107–22.

Thompson P (2017) Communication technology use and study skills. Active Learning in Higher Education 18(3): 257–70.

