May 25, 2014, by Brigitte Nerlich

Science is not what you want it to be

This is a GUEST POST by PHILIP MORIARTY

The debates sparked by Circling the Square continue “below the line” of a number of insightful blog posts. (And mine). [And mine, Brigitte]

This level of engagement between natural scientists and sociologists is great to see and, given the momentum we established last week, it would be a great shame if the Circling… conference did not become an annual event. The Making Science Public blog will, I’m sure, keep you posted on this. (I think we must have done something right when a physics PhD student saw fit to tweet the message below.)

Leaving aside the perennial question of whether or not an aptitude for impenetrable writing is worn as a badge of honour in some areas of sociology — see here; and here; and, for examples spanning the truly awful to the actually rather quite good, here – the aspect of the conference that really exercised me was the vexed question of the independence and objectivity of scientific research. I was perhaps being somewhat mischievous with the choice of title for my post on the Circling… conference for physicsfocus: “The laws of physics are undemocratic”.

But not that mischievous.

I’ve been informed by e-mail that I entirely missed the point and that, of course, no-one had ever said that all scientific evidence is tainted by investigator bias, or that sociologists in general have any beef with the idea of scientific objectivity. (This line, from the description of an influential book by Sheila Jasanoff, would suggest otherwise: “… who should define what counts as good science when all scientific claims incorporate social factors and are subject to negotiation?”). Nestling beside those messages in my inbox are e-mails whose authors are equally convinced that, of course, we’re all subject to cognitive biases so how could we ever have truly objective data? And over at Occam’s Typewriter, Athene Donald is of the opinion that I am “verging on the simplistic” in my belief “that science is inherently neutral”.

Put simply, if the scientific process is not inherently neutral then we’re not doing it right. I hate to succumb to the usual temptation to quote Feynman, but the following words are so apposite to this debate, I can’t resist. (Sorry, Athene!).

“If you’re doing an experiment, you should report everything that you think might make it invalid – not only what you think is right about it…The first principle is that you must not fool yourself – and you are the easiest person to fool…After you’ve not fooled yourself, it’s easy not to fool [others].”

I stress yet again that I am not referring to how scientific data will be used by politicians or reported by the media. Nor am I suggesting that all science is free of observer bias. (Indeed, I’m embroiled in a debate at the moment about a series of high profile papers which are based on a worrying mix of experimental artefacts and strong researcher bias.)

But Rule #1 of Science 101 is that we must aim to hunt out sources of random and systematic error and reduce them as much as we can. We drive undergraduate physicists to distraction in their laboratory sessions because of this focus on experimental uncertainties. We then decimate – sometimes even literally – their marks for a lab. write-up if they don’t include error analyses. (I’ve often paraphrased Pauli – somewhat out of context — in 1st year lab. lectures I’ve given, stating that to report a result without its associated uncertainty is “not even wrong”.)
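To make "a result with its associated uncertainty" concrete, here is a minimal sketch of the simplest first-year error analysis. It reports the mean of repeated readings together with the standard error of that mean. It assumes independent readings affected only by random error; systematic errors need separate treatment. The readings themselves are hypothetical.

```python
import math
import statistics


def mean_with_uncertainty(readings):
    """Return (mean, standard error of the mean) for repeated, independent readings."""
    n = len(readings)
    if n < 2:
        # A single reading says nothing about the spread, so no uncertainty can be estimated
        raise ValueError("need at least two readings to estimate an uncertainty")
    mean = statistics.fmean(readings)
    # Sample standard deviation (n - 1 in the denominator)
    s = statistics.stdev(readings)
    # Standard error of the mean shrinks as 1/sqrt(n)
    return mean, s / math.sqrt(n)


# Hypothetical repeated measurements of g in m/s^2 (illustrative values only)
readings = [9.79, 9.83, 9.81, 9.77, 9.80]
g, dg = mean_with_uncertainty(readings)
print(f"g = {g:.2f} ± {dg:.2f} m/s^2")  # prints: g = 9.80 ± 0.01 m/s^2
```

This sketch only captures random scatter. Hunting out systematic error (a miscalibrated timer, say) is the harder part of the job, and no formula does it for you.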

Science must always strive to be neutral in its methods and its data interpretation. This is a very simple, if perhaps not simplistic, message. The remarkable success of science in explaining so much of the natural world via reproducible experiments coupled to mathematical models, simulations, and theory shows that scientific neutrality can indeed be attained, and is not just the idealisation which some suggest it is.

“Regulatory science” is not science.

It was very clear from the Circling the Square conference that what a practicing scientist understands by the term “science” is very often not that closely related to how “science” is viewed by those in sociology. This is the crux of the debate and there are misconceptions and preconceptions on both sides.

A colleague in Sociology and Social Policy here in Nottingham, Sujatha Raman, brought this important document to my attention yesterday. (Thanks, Sujatha!) Although I’m familiar with the concepts of post-academic and so-called “Mode-2” science, the “regulatory science” to which Jasanoff refers in that document is something with which I’m not particularly familiar.

So I spent a little time genning up on the topic of regulatory science.

And I got more and more frustrated.

I realise that I am yet again picking at old “science wars” wounds (cf. last week’s comments on the Sokal affair), but those wounds really need to be re-opened. (I agree entirely with Reiner Grundmann, Chair of the Science and Technology Studies Strategy Group here at Nottingham, that frank discussion is required.)

Let’s make the first incision…

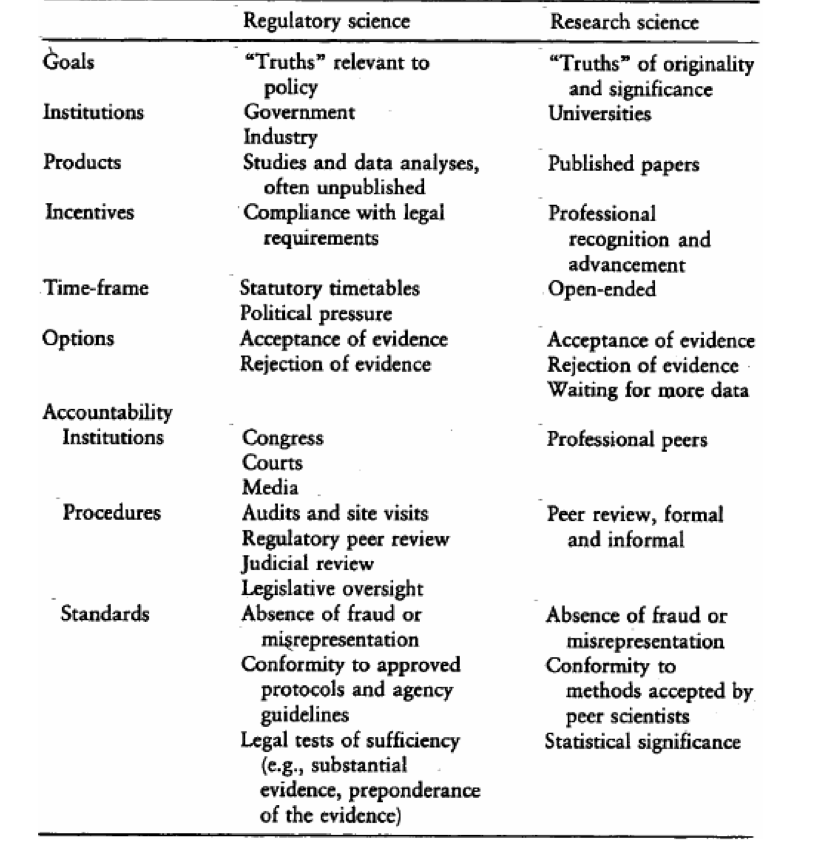

“Regulatory science” is an oxymoron. The differences between “regular science” (i.e. science) and regulatory ‘science’ are spelt out in the table below (from Sheila Jasanoff’s book “The Fifth Branch: Science Advisors as Policymakers”). Regulatory ‘science’ is a damn good recipe for introducing deliberate bias, for diluting the quality of scientific evidence, and for reducing public trust in the independence and reliability of scientific conclusions.

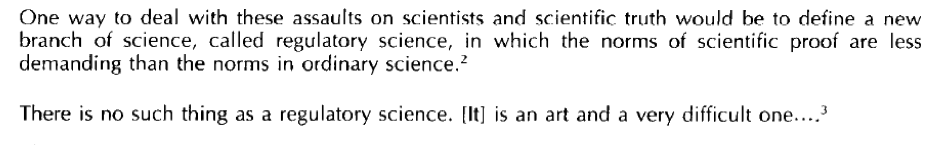

I’m certainly not going out on a limb in stating this. Irwin and co-authors start off their 1997 discussion of regulatory science with the following excerpts from earlier papers:

It may well be that when sociologists speak about “science” that they sometimes have this type of regulatory ‘science’ in mind. If so, very many scientists and sociologists are going to be speaking past each other until we agree some common ground.

For scientific evidence to be credible and trustworthy, the scientific process has to be as independent and disinterested as possible. Regulatory science — or, indeed, any of the other “next generation” forms of science including post-academic, Mode-2, etc. — wilfully erodes this independence and disinterestedness.

The parallels between regulatory ‘science’ and Research Councils UK’s advice on impact are also rather striking. But that’s a blog post for another day…

Coda: Reiner pointed me towards a fascinating blog post and associated comments thread on the matter of the extent to which physical laws are fundamental and objective.

I think that any physicist reading Cartwright’s comments quoted in that post will grind their teeth quite a bit and may, if so inclined, mutter some rather choice language under their breath. (I should stress that Reiner is at pains in the comments thread to state that he is just quoting Cartwright, not supporting her arguments).

Newton’s second law most definitely does apply to the situation which Cartwright describes, contrary to her belief. Cartwright’s straw-man argument is flawed to its core (particularly the idea of physics being a “fundamentalist faith”). The issue with the falling banknote is not that Newton’s second law fails, it’s that one has to apply it correctly! There are a number of forces acting on the banknote and these must all be taken into account.

Predicting the motion of an object on the basis of a combination of forces is, of course, not always straightforward (and can often be intractable), but that’s not because Newton’s second law is not valid! I highly recommend James Gleick’s book Chaos for a thorough grounding in how simple laws of motion can produce complex behaviour.
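The banknote itself is far too complicated to model here. Still, the point that Newton’s second law holds when several forces act can be sketched for a much simpler case: an object falling from rest under gravity while feeling an air-drag force proportional to its velocity. This is a minimal sketch under stated assumptions. The linear drag law, the mass and the drag coefficient are all hypothetical. A real banknote also experiences lift and torques, which is exactly why it flutters.

```python
import math

G = 9.81  # gravitational acceleration near the Earth's surface, m/s^2


def velocity_numeric(m, b, t_end, dt=1e-4):
    """Step m dv/dt = m*G - b*v forward in time with explicit Euler steps (downward positive)."""
    v = 0.0
    steps = int(round(t_end / dt))
    for _ in range(steps):
        net_force = m * G - b * v   # add up all the forces: weight minus (assumed linear) drag
        v += (net_force / m) * dt   # Newton's second law: acceleration = net force / mass
    return v


def velocity_exact(m, b, t):
    """Closed-form solution of the same equation, starting from rest."""
    v_terminal = m * G / b
    return v_terminal * (1.0 - math.exp(-b * t / m))


m, b = 0.001, 0.005  # hypothetical: a 1 g object, drag coefficient in kg/s
for t in (0.1, 0.5, 1.0):
    print(t, velocity_numeric(m, b, t), velocity_exact(m, b, t))
```

The numerical and closed-form velocities agree closely. Both approach a terminal velocity of m·G/b, the speed at which drag balances weight. Nothing about the second law "fails" here; the law simply has to be applied to the net force.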

One doesn’t even have to consider a system as complicated as the banknote fluttering to the ground to see how exceptionally intricate behaviour can originate from a simple equation which has Newton’s second law at its core:

(Jump to ~ 18:20 if you want to cut to the chase!)

[…] this post, Philip Moriarty sent me his take on the science politics issue, which you can read here – there are certainly some overlaps between what I'll say below and what he says in his […]

[…] on Sunday morning (25 May) both Brigitte and Phil mused again about the issue of science and politics and, hold the front page, largely agreed with […]

Wouldn’t it be the most straight forward assumption that sociologists are thinking of their own work? That is the part of the world they have direct access to.

That is a sentiment quite common among climate sceptics. They would like to negotiate, as you do in politics. If science says gravity exists and I think it doesn’t, let’s meet halfway and agree that the gravitational acceleration is g = 5 m s^-2. If science says that the climate sensitivity is between 1.5 and 5, let’s agree on 1.5. It is one of the clearest examples of thinking that science is just politics.

I wondered how long it would be before someone misrepresented climate sceptics with the false analogy of gravity.

Paul Matthews, if you read carefully, you will see that I did not claim that climate sceptics made statements about gravity. That would also make no sense.

I referred to gravity as a neutral example of the mechanism I regularly see in comments at WUWT and Co. Sometimes it helps to use an example that is less tainted by politics to see a fallacy more clearly.

No Victor. By using examples of gravity and ECS to illustrate your point about negotiation, you are directly implying that negotiating the best point estimate of gravitational acceleration is equivalent to negotiating the value of ECS. There is absolutely no way you can equate those two physical parameters. Or rather, you can if you want, but don’t expect anybody else with any sense to accept it.

Jim Bouldin, you are also a scientist, right? Wouldn’t you agree that scientists should arrive at better estimates for the climate sensitivity by exchanging arguments in the scientific literature? Rather than negotiating a nice value in political talks in Washington? Call me surprised, or rather, I probably do not understand your comment.

That’s a red herring, Victor. Paul’s point was that you were implicitly equating the confidence that scientists have in g with the confidence they have in ECS. And I agree with him: you were. And this is absolutely the wrong thing to do; it’s ludicrous.

Jim Bouldin, I am glad we agree on where science should be discussed. Naturally we have more confidence in the value of g than in the ECS. Just look at the error bars and the complexity of the climate system. It sounds rather amazing to me to assume that I or any reader would think otherwise.

I will leave it up to the reader to judge whether equal confidence was implied or only an outside non-political example.

Any interested STS researchers can naturally use my public comments and have my consent to use my name.

No, Victor did not imply that confidence in the two values is equivalent and it’s not necessary for that to be the case for his point to stand.

Victor

Your simplistic and false analogies illustrate the very problem conferences such as Circling the Square try to address. You’ve spent much of your professional life steeped in the cut-and-dried world of physics, carry little knowledge of your critics, and use the dull thump of boring falsehoods to suppress opponents. The influence of politics and science on each other is a complex topic. It is incapable of receiving satisfactory address at hands such as yours, whose thinking fails to extend beyond “Physics=>my opponents are wrong”.

Hi

I have let this comment through. However, I would urge commenters to stick to constructive comments and critique and not engage in what looks more like personal attacks.

Victor listen to yourself for a second.

The acceleration due to gravity is a universal property of matter, a fundamental aspect of nature as we know it, and has been measured, repeatedly and by different methods to a very high degree of precision, which to a single dec. place is ~9.8 m/sec/sec.

ECS is most likely somewhere between 1 and 4 degrees C, or, if I use your numbers, between 1.5 and 5.

Now tell me with a straight face that the confidence in those two values is similar.

And by the way, when Charney et al first came up with 3.0 as a point estimate in 1979, that was essentially a “negotiated” value in the sense that they had no basis for choosing which value was most likely between 1.5 and 4.5, so they chose the midpoint.

My apologies, if my professional skills allow me to see whether something is science with more clarity than others and to state so with confidence.

If my “opponents” would not claim falsehoods with strong confidence they would not be my “opponents”.

The relationship between science and politics is indeed complex. I have always wondered why the “sceptics” do not make political arguments or point to the parts of the science (impacts, extremes) that are actually not yet solid. That sounds like a better long-term strategy than sacrificing your reputation by denying that CO2 emissions can heat the Earth’s surface, or by making similar, completely wrong claims from the lists of Roy Spencer or Skeptical Science. That almost gives the impression that the “sceptics” do not believe themselves that the population would buy their political arguments.

I do not see it as unrealistic of Brigitte Nerlich to expect that people who disagree about something engage in polite conversation and try to understand the reason for their differences and what should be investigated to resolve the disagreement. That is how we do it in science, when it works well. I am not sure whether this model can be transferred to politics, where there are differences of interest that also require negotiation. Still, even in politics people would ideally stay polite, and in the better functioning democracies they do.

What causes the mysterious action at a distance which pulls bodies towards each other? Newton famously said “I frame no hypotheses”. Attributing it to the curvature of space-time around massive bodies doesn’t solve the riddle, it only changes it to

‘What causes the mysterious action at a distance that pulls space-time towards massive bodies’?

Similarly in climate science: ‘What causes the observed cyclic variation in various climate metrics’

I tentatively point out that all systems are comprised of cybernetic feedback loops, and all feedback loops exhibit oscillations about means.

The solar system is a good example of this, with its librations and variations similarly oscillating about means at various timescales.

What I find curious, Roger, is that people are still taken seriously when they try to claim that a multi-decadal increase in global surface temperature (along with accelerating rates of ocean acidification and ice melting) can be explained by <1% variation in total solar irradiance – on either shorter or longer timescales. This is particularly curious, given that these observed terrestrial changes are entirely consistent with a cumulative radiative energy imbalance caused by a 40% increase in CO2 ppm over 200 years (unprecedented in at least the last 800k years). As Stephen H. Schneider once famously remarked: "If you deny a clear preponderance of evidence, you have crossed the line from legitimate skeptic to ideological denier."

For the record, the simple facts of the matter are as follows: Cyclical solar activity, on any timescale, cannot explain the multi-decadal warming of the last 150 years. However, these solar cycles (along with other natural factors such as the cooling effect of industrial pollution) do explain why the warming that has occurred – and is occurring – has not been uniform.

http://lackofenvironment.wordpress.com/2012/09/03/uncomfortably-numb-is-no-good/

Brigitte

There are people in climate blogs with pre-determined notions, and their critics. Your expectations are that these people somehow gather around certain venues where they miraculously shed their mutual antagonisms and start co-existing.

Skeptical commenters deserve just as much protection as supporters of the orthodoxy do. I am more than happy to make my point and move on.

I would hope that co-existing would be possible. Surely you do not want to eradicate every climate scientist that disagrees with you?

Mr Venema, I have dual post-graduate degrees and a fellowship in medicine. I find crude analogies just as insulting as strong language, if not more so.

Hi, I don’t expect people to shed their antagonisms; far from it, I know by now that that is very unlikely. However, I expect these antagonisms to be expressed politely.

Wow, this seems to be a comment stream ripe for STS study. An illustration of how an analogy only works if those it is aimed at are willing to actually consider what’s being illustrated. Otherwise, criticise and/or mis-represent the analogy, and/or attack the person making it. I hope that anonymity issues don’t mean that it’s not possible to use these comments for research purposes.

Shall we say that anyone who does not object within a week implicitly agrees with his/her comments being used?

I am pretty sure I won’t use this for a study, tempting as it may be. So people can sleep easily and ethics committees as well.

Not by those who are involved in the thread. Not by those allied to those involved in the thread. Not by those who did not run it by an institutional ethics review.

Just rest assured I won’t do ANYTHING with this!

At least my public comments can also be used by those who are involved in the thread. No problem; I did not say anything I should be ashamed of. Personally, I see no need for any study analysing my public comments to go through an institutional ethics review. Anyone should feel free to do so. I have problems with spreading private communications, but maybe that is just me.

Would it be fair to conclude that being a physicist you would have zero experience in this matter and therefore opinions such as the above can be discarded?

While I am indeed a physicist, I think I am mentally capable of deciding what people can do with my public comments. But if your medical judgement is that I can not, maybe we compromise and agree that my opinion on social media studies can be discarded, while your opinion on climate science can be discarded?

I think the problem with this analogy is that ‘gravity’ has been used before in climate debates, albeit slightly differently, and may therefore provoke a strong response. Just a guess! (http://nofrakkingconsensus.com/2013/02/01/is-climate-change-like-gravity/)

Quite possibly, although it’s not one I’ve seen myself. Personally, it seems that dialogue would improve greatly if rather than responding strongly, people tried reading things again. Maybe that’s just me, though.

Yes, that is exactly the problem, and in fact Paul’s comment above relates to a Twitter discussion we had recently about the problem of people equating the strength of evidence of climate change science to that from various other fields of science. I’ve seen many such comparisons, and they seem to be increasing in number. And it’s not hard to see why people make them either: they are trying to convince people that the strength of evidence for climate science is stronger than it really is, via these comparisons. I took specific exception to comparisons with evolution, since I know for a fact that many people have a poor understanding of the depth of evidence behind evolution. They hardly even know the basics, which they remember mainly from the intro bio class they had to take in college, or whatever. But there is absolutely no comparison between the strength of evidence for the two, and if you said something like that to top-level evolutionary biologists, they would laugh you off the stage.

They make these comparisons and then they try to act like they didn’t actually make them, or that’s not what they meant, or whatever. Case in point: Victor Venema right in this very thread. Sorry, that’s a no-go. We’re not that gullible.

For what it is worth, I have stated in the past that the evidence for evolution is orders of magnitude stronger than for anthropogenic climate change, and that it is thus pretty amazing that people are able to disbelieve it. (I hope someone else can find where I said it; I couldn’t.)

In this respect I do not feel that climate scientists are doing something wrong. The main problematic countries are the creationist countries and if biologists are not able to get their message accepted why should climatologists be able to? The solution to this political problem will have to come from society and the only thing I can do as scientist is correcting misunderstandings like Sisyphus.

I’m glad to hear you say that Victor.

I want to clarify also that I’m mainly objecting to people who argue for the equivalencies of climate change science and other major branches of science. This objection doesn’t, of course, necessarily imply anything about the quantity and quality of the evidence for human effects on the climate system, which is a separate question.

These topics require good thought and extended essays to really elucidate well and we have to be careful with brief statements which can be easily misconstrued.

You can tell what people should do with your comments. You cannot do the same with others’ comments, can you? My judgement on what can and cannot be done with public comments comes not from my medical background but from knowledge of the literature on ethical issues surrounding research on online material.

Please disregard my opinions in climate science, of which I offer precious little. My opinions on STS studies and psychology literature in climate are well-grounded and again, are based on knowledge of the literature in these areas. So, you would now understand the basis for rejecting your conclusions of STS as pertaining to climate change from solely an understanding of the physics of the climate.

I wonder why the law of gravity is always wheeled out as the example for the objectivity etc. of science. Humans had observed falling bodies before Newton formulated the law. What the law does is provide a description of an observed regularity. It does so in a form which allows prediction (within limits). There are other instances where we do the same (not only in physics): observe a regularity, try to find a mechanism, model, formula, or theory which describes it, and use this for prediction. Not all science, all of the time, proceeds like this. Perhaps these are little islands of order in the sea of chaos.

Those who demonstrate a falling object in order to demonstrate the validity of the law of gravity should ask themselves what this is supposed to show. That the object falls because of a formula? We don’t need a formula to understand gravity; we experience it every day. That the object falls at a speed that can be calculated? Then we have a good model of what is going on. Not more, not less.

I’ll bite. Could it be that people simply use gravity as a simple example that everyone knows?

The law of gravity is much more than just falling objects. With it, and the calculus also invented by Newton, you can show that a spherical mass can be treated as a point mass. More importantly, it connects the falling object on Earth with celestial movements. An enormous feat, especially for the time.
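The claim that a spherical mass can be treated as a point mass (Newton’s shell theorem) can be checked numerically. The sketch below illustrates the result; it is not Newton’s own method. It works in assumed units with G = 1 and uses hypothetical masses and radii. It slices a thin uniform shell into rings, adds up each ring’s pull along the axis, and compares the total with the point-mass value G·M/r². A solid sphere is just nested shells, so the same conclusion carries over to it.

```python
import math


def shell_field(M, R, r, n=20000, G=1.0):
    """Magnitude of the axial gravitational pull at distance r from the centre of a thin,
    uniform spherical shell (mass M, radius R), summed ring by ring with the midpoint rule."""
    dtheta = math.pi / n
    total = 0.0
    for k in range(n):
        theta = (k + 0.5) * dtheta
        # Mass of the ring between theta and theta + dtheta
        dM = 0.5 * M * math.sin(theta) * dtheta
        # Squared distance from the ring to the field point on the axis
        s2 = r * r + R * R - 2.0 * r * R * math.cos(theta)
        # Only the axial component survives; sideways pulls cancel around the ring
        total += G * dM * (r - R * math.cos(theta)) / s2 ** 1.5
    return total


# Outside the shell the pull matches a point mass; inside it vanishes
print(shell_field(1.0, 1.0, 2.0), 1.0 / 2.0 ** 2)
print(shell_field(1.0, 1.0, 0.5))
```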

That’s *exactly* it, Victor. It’s usually the simplest demo we can give with the “stuff” to hand.

I am guilty of picking up a tin can that was close to hand during one of the “Circling…” sessions and making the point about gravity to which Reiner alludes.

But if I’d had a laser pointer with me, I could have explained how Maxwell’s equations, coupled with the universal laws of statistical mechanics and quantum physics, with solid state physics thrown in on top, all work together in concert to provide that wonderful beam of radiation. And that nothing about how all of that incredibly complicated science works is culturally/societally determined.

It’s the following type of assertion which motivated the gravity demo: “… who should define what counts as good science when all scientific claims incorporate social factors and are subject to negotiation?” [See post above for source].

The use of “all” in that sentence is the issue.

If it is indeed impossible in some fields to reach robust conclusions based on reproducible, bias-free measurements and experiments then maybe we should stop labelling that area of research as science?

I can understand your problem with that sentence as it could be interpreted in a way that science is ‘made up’ or ‘invented’. STS, and many historians of science, suggest that science is a social activity. A new understanding of the world, or a new theory is not made through a publication but through the uptake by other scientists and wider society. Therefore, one cannot study the emergence of scientific claims through a model where a sole inquirer faces objects (nature or society) and arrives at true knowledge which is then tested and verified. The whole process is social and therefore you see the concept of negotiation on Jasanoff’s book cover.

One of the origins of STS, the Edinburgh ‘strong programme’ started by applying scientific principles to the study of scientific knowledge. Here are their famous four elements as bullet points:

- Causality: Scientific knowledge is caused by physical, psychological and social factors;
- Impartiality: Sociologists cannot judge scientific knowledge, only explain it;
- Symmetry: Treat true and false knowledge claims in the same way. Both are socially caused. This view is different to ‘debunking’;
- Reflexivity: The same principles apply to sociological research itself.

This was intended to provide an empirical analysis of science as it is practiced, in contrast to accounts that relied on scientists’ own accounts (or hagiographies).

Of course, there is an element of this, but what you seem to be overlooking is that the reason other scientists accept the theory is because it has been tested and confirmed. If someone finds evidence against the theory, scientists don’t negotiate to decide whether to accept the original theory, an updated theory, or something in-between. It seems to me that you’re taking the societal aspects associated with individual researchers or groups of researchers, and assuming that you can apply that to the entire scientific process.

First, “And Then There’s Physics” makes a very good point.

Reiner,

It is interesting that after the Mathematical Cultures conference there was a long running discussion of the mathematical equivalent of “gravity”, i.e. 2+2=4. This was initiated by this post

http://www.celebyouth.org/will-smith-and-maths/

my contribution is here (in a general context, the relevant bit is half way down)

http://magic-maths-money.blogspot.co.uk/2014/04/when-being-wrong-is-right.html

but there have been follow-ups. Unfortunately the discussion, which centred on Wittgenstein, was via “Reply-all” e-mails.

Tim

Dammit. Sorry for the truncated post. Don’t quite know why that snippet got inadvertently posted.

Here’s the rest…

“Reiner, this really worries me, I must admit. If the stance of a large fraction of the sociology community continues to be that the *entire* scientific process, including the physical and mathematical laws that result from that process, is socially determined, then we have made very little progress indeed since the “science wars” of the mid-nineties.

Yes, of course, scientists operate and interact within a social setting. But there’s a long, long way from this to asserting that physical theories and experimental data are the way they are because of the sociology inherent in the scientific method.

This is why we keep referring to Newton’s laws and gravity. F = d (mv)/dt (i.e. force is the rate of change of momentum, Newton’s 2nd law) is not written that way because scientists decided by committee that this was the best way to write it. It’s written that way because it’s representing a mathematical law at the heart of the physical world which has been confirmed time and time again.

A really neat example in mathematics is the Mandelbrot set. Watch this video. The Mandelbrot set was *discovered* (just like physical laws such as E=hf or Maxwell’s equations or E=mc² or…). Are you going to argue that this remarkable mathematical landscape wasn’t discovered? That it exists, like other discoveries in physics and maths, solely “due to the uptake by other scientists and wider society”?

I very much hope that I am misunderstanding you here and that the argument here isn’t that the laws of physics are “mere social convention”, as Sokal put it.
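For readers who haven’t met it, the Mandelbrot set comes from a one-line rule. A complex number c belongs to the set if repeatedly applying z → z² + c, starting from z = 0, never sends z off to infinity. Below is a minimal escape-time sketch. It relies on the standard fact that once |z| exceeds 2 the iteration must diverge. The iteration cap (200) and the plotting window are assumptions chosen for illustration, since true membership would require infinitely many iterations.

```python
def in_mandelbrot(c: complex, max_iter: int = 200) -> bool:
    """Escape-time test: True if z -> z*z + c stays within |z| <= 2 for max_iter steps."""
    z = 0j
    for _ in range(max_iter):
        z = z * z + c
        if abs(z) > 2.0:
            return False  # provably escapes to infinity
    return True  # did not escape within the (assumed) iteration cap


def ascii_mandelbrot(width: int = 60, height: int = 24) -> str:
    """Render a coarse text picture of the set over an assumed window of the complex plane."""
    rows = []
    for j in range(height):
        y = 1.2 - 2.4 * j / (height - 1)          # imaginary part, top to bottom
        row = ""
        for i in range(width):
            x = -2.1 + 2.7 * i / (width - 1)      # real part, left to right
            row += "#" if in_mandelbrot(complex(x, y)) else "."
        rows.append(row)
    return "\n".join(rows)


print(ascii_mandelbrot())
```

Anyone running this gets the same picture, whatever their school of thought. Zooming in by shrinking the window reveals ever more detail.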

Philip

Are you appealing to something beyond social interaction, something which is ‘out there’? Of course we can come to the conclusion that something is true, or that we believe in it, or that someone has discovered it, or whatever formula you want to describe ‘it’. But would you deny that it is a social process which has led to a state of knowledge about the world that we have today? That this knowledge was different in the past, and that it will be different in the future? This should not be a big deal to acknowledge, unless you want to make the point that there is a reality out there which (one day) can be known in its totality and which reveals itself to us in scientific models and theories. What I am arguing is not the science wars reloaded but common knowledge since Kuhn’s The Structure of Scientific Revolutions.

BTW, mathematics is a great social convention, built on axioms and tautologies. Nevertheless, according to Gödel, paradoxes emerge in such systems which then need creative solutions. I am not sure that you as a physicist need to be committed to an ontological position which claims that the world is mathematical.

What I am arguing is not the science wars reloaded but common knowledge since Kuhn’s The Structure of Scientific Revolutions.

I have no time for Kuhn. His idea of a “paradigm shift” sweeping away the old and heralding a brave new approach to science is just wrong. Relativity did not sweep away Newton’s laws. Newton’s laws fall out of relativity in the appropriate limits. We even have something called the “correspondence principle” in quantum mechanics where, in the appropriate limits, the laws and equations of quantum mechanics reproduce classical physics.
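The statement that Newton’s laws “fall out of relativity in the appropriate limits” can be made concrete with a short worked expansion. This is the standard special-relativistic treatment of a particle’s momentum and kinetic energy, expanded for speeds v much smaller than the speed of light c. It is one illustration only; the quantum-mechanical correspondence principle is a separate result not shown here.

$$
\gamma = \left(1 - \frac{v^2}{c^2}\right)^{-1/2} = 1 + \frac{1}{2}\frac{v^2}{c^2} + O\!\left(\frac{v^4}{c^4}\right)
$$

$$
\mathbf{p} = \gamma m \mathbf{v} \approx m\mathbf{v}, \qquad E_k = (\gamma - 1)\,m c^2 \approx \tfrac{1}{2} m v^2
$$

$$
\mathbf{F} = \frac{d\mathbf{p}}{dt} \;\longrightarrow\; \frac{d(m\mathbf{v})}{dt} \quad \text{as } v/c \to 0
$$

The last line is exactly the Newtonian form F = d(mv)/dt. The old law is recovered as a limit of the new one rather than being discarded.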

The progress of science is much more nuanced and subtle than Kuhn proposes.

It is also interesting that you say that what you are arguing is “common knowledge”. But it’s just Kuhn’s opinion. Just because Kuhn said it doesn’t make it right!

On the other hand, the laws of physics are “common knowledge” of a very different type. Those laws underpin the way the natural world works. It doesn’t matter to which school of thought one pledges one’s allegiance (e.g. Edinburgh, Frankfurt, Bath, or, indeed, Nottingham): what wins out is the reproducibility of the evidence and the ability of a theory to explain that evidence. Newton’s laws, Maxwell’s equations, Schrödinger’s equation, etc., are the same the world over.

Sure, one could couch them in different mathematical language. Or one could even remove the mathematics and recast all of the equations in unwieldy prose. It doesn’t matter. Those physical laws are objective in the sense that when the experiment is done correctly it doesn’t matter *who* does the measurements (or to whatever particular ‘breed’ of ontology they subscribe): they will reproduce results obtained decades, centuries or millennia ago on an entirely different continent.

[As for Gödel, let’s not throw the baby out with the bathwater. Just because the incompleteness theorem exists does not mean that mathematics (and mathematics is the language of physics) has nothing useful to say at all!]

But would you deny that it is a social process which has led to a state of knowledge about the world that we have today? That this knowledge was different in the past, and that it will be different in the future?

But it depends on how you define knowledge. Given that there appears to be some consternation about using gravity in these discussions, let’s choose a couple of different examples. Our knowledge that a prism will refract light is no different now than in Newton’s time. Our knowledge that the voltage across a resistor is the product of the current through that resistor and its resistance is no different from when Ohm discovered this very simple law nearly two hundred years ago.

In what sense do I mean that our knowledge is no different? Well, we can repeat these experiments/ask the same questions and we’ll get the same results as were found centuries ago (e.g. through what angle is red light refracted?; what is the voltage across a 10 Ohm resistor when it has a current of 1 amp flowing through it?)
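Both questions reduce to short relations that have not changed since they were established. As a worked illustration (added here, not part of the original comment): Ohm’s law gives the voltage directly, and the refraction at each face of a prism follows Snell’s law, where the refractive index of the glass, n₂, depends on wavelength, which is why red light is bent through a different angle than blue light.

```latex
V = IR = (1\,\mathrm{A})(10\,\Omega) = 10\,\mathrm{V}

n_1 \sin\theta_1 = n_2 \sin\theta_2
```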

What *has* changed in the intervening centuries is the depth of our understanding of the origin of these effects. In both cases, quantum mechanics (QM) underpins the fundamental physical processes. *But that doesn’t mean* that Newton’s experiments on light or Ohm’s (viciously attacked at the time) results on electrical conduction are now worthless. Those results still stand and, indeed, can be reproduced by primary school children across the world, who not only don’t need to understand QM to draw valid conclusions but have absolutely no knowledge of the existence of QM!

Quite why Kuhn was so influential, I really don’t know. The Structure of Scientific Revolutions was published in 1962, long after the appearance of quantum mechanics. Kuhn did his PhD in physics. He must have known about the correspondence principle. His entirely flawed idea of incommensurability, it seems to me, can be dismissed immediately simply by pointing to the correspondence principle!

But would you deny that it is a social process which has led to a state of knowledge about the world that we have today?

Peer review and social interactions of course underpin how the state of scientific knowledge evolves. But this is very different to stating that all knowledge derives from social interactions! There’s a neat quote, whose origin I can’t find, which goes something like:

Three months in the lab can save you half a day in the library.

It is not entirely uncommon (to put it mildly) for a scientist (or group of scientists) to work really hard on an experiment; get good, reproducible data with a high signal-to-noise ratio; analyse those data carefully and come up with a credible theory to explain them…

…only to find that the results they’d obtained, and the theory they’d derived, had been published decades earlier, unbeknownst to them.

Tsk.

“What is the voltage across a 10 Ohm resistor when it has a current of 1 amp flowing through it?”

should, of course, be:

What is the voltage across a 10 ohm resistor when it has a current of 1 amp flowing through it?

Apologies.

Philip

You don’t have to bang on about regularities in nature which have been convincingly established. I have no quarrel whatsoever with that. Open doors!

But:

1. This is textbook science. If you look at science in the making you will see the crucial role of social interactions: questioning the quality of data, the proper setup of experiments, the qualifications of researchers, perhaps even the experimenter’s regress (H. Collins).

2. Not all science can rely on experiments to ‘verify’ (are you happy to use this term?) hypotheses.

Regarding Kuhn, I think this would merit a special debate. Let me just ask you one question, as you are so dismissive of his notion of paradigm change in the history of science. Do you think there is scientific progress over the centuries, and we are just building more and more complete (or perfect) knowledge about the world?

Sorry, Reiner, but I’m afraid I do have to “bang on” about those “regularities in Nature” when faced with comments such as the following:

…that this knowledge was different in the past, and that it will be different in the future?

I’ve given you very simple and clear examples of where knowledge – in the sense of the results of an experiment or simple observation – has remained exactly the same today as it was centuries ago.

Not all science can rely on experiments to ‘verify’ (are you happy to use this term?) hypotheses.

I’ve very, very rarely done an experiment to verify an hypothesis. I do experiments to explore Nature.

And if a research method is not based on measurements which are reproducible and *in principle* bias-free, then should we be calling it science? Victor makes this point elsewhere in the comments thread…

Do you think there is scientific progress over the centuries, and we are just building more and more complete (or perfect) knowledge about the world?

Yes, of course there’s been scientific progress. This was clear in the examples I gave you above re. those “regularities in Nature which have been convincingly established”.

I’m largely in agreement with Philip here. I was interested in whether you (Reiner) could clarify something you said in an earlier comment. You said:

Are you suggesting that the change in our knowledge can be ascribed to societal influences? I would argue that the reason for the change is because of a change in the evidence available. So, one could argue that our knowledge would change with time even in the absence of societal influences (i.e., in an ideal scientific framework in which all those involved are objective and unbiased). Where, then, is the evidence that societal effects are influencing our advancement of knowledge – other than in determining what we may choose to study?

If someone in good faith misunderstands the term gravity, that at least demonstrates that the laws of gravity are politicised in the climate “debate”. I will try to use the speed of light next time.

That was a reply to a comment by Brigitte Nerlich stating that she had misinterpreted a comment of mine because it mentioned gravity. I can no longer find either comment. Perhaps both were deleted?

Sorry, I deleted those because they really were off topic and in the end I thought they would just totally confuse people! What I was musing about was that ‘gravity’ is used by some people as a way of framing the climate debate, just as ‘Frankenstein’ was used for a while to frame the biotech debate… The term is an entry point to a standard script through which to see and, seemingly, understand things…. (John Turney, In Frankenstein’s Footsteps, 1998). So, just as Frankenstein may be used to ‘understand’ biotech, ‘gravity’ may be used to ‘understand’ climate change, while, in both cases, those who use that script for understanding and debate may not really have read the story (Frankenstein, Mary Shelley) or the science of gravity (Newton etc etc.).

Additionally I want to say that this was not meant as a criticism of your comment. You used the word ‘gravity’ to make a valid point. However, this stimulated some discussions which went beyond the point you wanted to make, it seems. So, this made me think that as soon as people see the word ‘gravity’ they might take it as a starting point for framing their own views on issues, in this case either ‘climate change’ or ‘science’, while by-passing the point you wanted to make. This is to say ‘gravity’ has become something like an anchor for a cultural script that is just activated by that word…. As Jim Bouldin says in another context in this thread: “These topics require good thought and extended essays to really elucidate well and we have to be careful with brief statements which can be easily misconstrued.” 🙂

My point is that you want to use a topic area that’s more complex and multivariate, and not dominated by a relatively few and simple cause and effect mechanisms that lead to relatively easy predictability. Ecology, for example, or economics. The speed of light’s little different from gravity in that respect.

Let’s also not overstate the difficulty of the problem. The basics are radiative transfer and an increase in relative humidity if the temperature increases. That might be complicated for the normal public, but is easy on a scientific scale. That is the difficulty level of astronomy. Thus the speed of light was not that badly chosen.

It only becomes messy when it comes to feedbacks and that can go both ways. Thus we need to understand that for the uncertainty estimate, but the other feedbacks are not that important for the mean. A large uncertainty does not make the case of the climate “sceptics” better. Because the damages are expected to grow strongly with the temperature, the expected value for the damages actually increases with larger uncertainties.
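The claim that larger uncertainty raises the expected damages follows from the damages growing faster than linearly with temperature (an instance of Jensen’s inequality). A minimal sketch, assuming a purely illustrative quadratic damage function and a two-point warming distribution with equal probabilities; neither the function nor the numbers come from the comment or from any climate model.

```python
def expected_damage(mean_warming: float, spread: float) -> float:
    """Expected damage when warming is mean_warming - spread or
    mean_warming + spread, each with probability 0.5 (illustrative only)."""

    def damage(t: float) -> float:
        # Hypothetical convex damage function: grows with the square of warming.
        return t ** 2

    outcomes = [mean_warming - spread, mean_warming + spread]
    return sum(0.5 * damage(t) for t in outcomes)


# Same mean warming (3 degrees), increasing uncertainty.
for spread in (0.0, 1.0, 2.0):
    print(spread, expected_damage(3.0, spread))
# 0.0 9.0
# 1.0 10.0
# 2.0 13.0
```

With a quadratic damage function the expected damage is exactly mean² + spread², so widening the distribution while keeping its mean fixed always increases it; any convex damage function shows the same qualitative behaviour.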

I agree that we don’t want to overstate (or understate) the difficulty of the problem. I just think it’s a lot more complex than that however. These are the kinds of questions that philosophers and historians of science deal with, and it certainly requires very careful and detached thought, and also a good working understanding of the various disciplines one wishes to compare. Climate science might indeed be simpler than ecology or economics, but I absolutely disagree that large uncertainty should increase skepticism. Indeed, I would argue exactly that skepticism should more or less always scale with the estimated degree of uncertainty.

Correction, that should read “but I absolutely disagree that large uncertainty should *not* increase skepticism”. Note also that I am referring to skepticism generally, i.e. as a philosophical stance, not to any specific climate change “skeptics”.

It is not the uncertainty in itself that I would worry about. I am no more skeptical about a 10-day weather forecast than about a one-day forecast. That does not make the 10-day forecast more subjective, more of a social construct, or more of a subject for STS studies than a 1-day forecast.

If you mean being skeptical about something as seeing this as a high priority topic, then I would argue that quantification, biases and unknowns are most important. A bias could be in the mean, but also in the probability distribution (uncertainty).

For example, when it comes to the climate sensitivity, I do not see it as a scientific problem that the confidence interval is large. That is no reason for skepticism as such. It is a societal problem and a better understanding that makes the confidence interval smaller is desirable in this respect.

It is a scientific problem that the uncertainty in the sensitivity estimated from models is larger than the model ensemble spread. This bias in the uncertainty needs to be quantified. Currently we only have subjective estimates (“expert opinion”) for the additional uncertainty due to unknown or at least not modeled factors. (Fortunately, we have multiple other ways to estimate the sensitivity.)

Philip

Exploring sounds weak for a scientist armed with all these certainties. Don’t you want to explain and predict? Or are you in fact unhappy with the term ‘verify’?

Reserving the term science for those areas where we can have reproducible knowledge screens out much of our knowledge base. You can only reproduce if the parameters remain constant, which is not a scenario that widely obtains.

Regarding the resistance to the term ‘social influences’ just think about what the alternative mode of cognition would be. The individual mind making discoveries? And then somehow making these universal? Surely even in this heroic picture of knowledge creation the ‘genius’ must convince others that what s/he found is true. Hence the persuasion strategies (at conferences, in peer review, to funding bodies, etc.). As Latour once put it, it is not so difficult to accept that nature strikes back when you try to come up with new knowledge claims; it is the colleagues striking back … because they have to be sceptical.

Can you sketch a process in which the basics of knowledge creation are a-social?

I think the resistance may come from a confusion between ‘social’ and ‘contamination’, as in: cognition is pure, social influence leads to bias. Correct me if I am wrong. But this reading would make sense to me and explain the worries you have about the concept.

I am all in favour of excluding bias, partiality, motivated reasoning, etc. from the process of creating scientific knowledge.

So open doors on this one, too 😉

Exploring sounds weak for a scientist armed with all these certainties. Don’t you want to explain and predict? Or are you in fact unhappy with the term ‘verify’?

No, I’m not at all unhappy with the term “verify”. But that’s not how experimental science in my area of physics works. The idea that a theory is put forward and experimentalists scramble to verify it is not how much of condensed matter physics/chemistry and nanoscience works (regardless of what sociologists tell us should happen!)

Do I want to explain my findings? Of course. But I do this on the basis of a body of exceptionally well-established knowledge. I don’t seek to verify Schrödinger’s equation every time I do an experiment, even though it’s that equation which underpins my measurements.

Reserving the term science for those areas where we can have reproducible knowledge screens out much of our knowledge base. You can only reproduce if the parameters remain constant, which is not a scenario that widely obtains.

Errmm. No. Sorry, but no. Reproducibility of measurements/experiments is absolutely key to the scientific method.

See, for example, this: http://www.nature.com/news/policy-nih-plans-to-enhance-reproducibility-1.14586

If reproducibility is not at the core of the scientific method then why would the NIH be concerned about enhancing it?

Regarding the resistance to the term ‘social influences’ just think about what the alternative mode of cognition would be. The individual mind making discoveries? And then somehow making these universal? Surely even in this heroic picture of knowledge creation the ‘genius’ must convince others that what s/he found is true.

No. The data, reproduced in independent experiments by different researchers, are what convince others that what she found is true. See the comment from “And Then There’s Physics”.

And, yes, an individual mind/lone researcher can certainly make discoveries. The history of science is replete with examples. And the way the genius (note absence of “scare quotes”) convinces others is that her observations are reproduced in other experiments, or her theory predicts the results of measurements. If not, then the observations/theory die a death. Eventually.

Can you sketch a process in which the basics of knowledge creation are a-social?

Apologies for the self-promotion but I know the work of the nanoscience group here in Nottingham best! Please watch this: Sixty Symbols: Atomic Switch. At the time of doing that experiment we had no idea that it was going to work. And yet it did. And when it worked it happened at 4 am. With no-one else present.

In what sense is this not knowledge creation? Sure, at the point of discovery I hadn’t yet disseminated that information. The atoms were either going to flip or they weren’t. But because of the physics (well, physical chemistry), not the sociology, of the situation. In what sense was this discovery socially determined?

I think the resistance may come from a confusion between ‘social’ and ‘contamination’, as in: cognition is pure, social influence leads to bias. Correct me if I am wrong. But this reading would make sense to me and explain the worries you have about the concept.

Similarly, I could suggest that your “resistance” to the idea of there being objective physical laws comes from your misconception about the scientific process. Let’s avoid terms like “resistance” — they’re not helpful.

It’s not a question of cognition vs social influence in any case. It’s a question of there being objective physical/chemical/biological laws, which were there long before there were humans on the planet (and which caused the appearance of the planet and life in the first place), which scientists discover.

Philip, to reiterate, I am familiar with the textbook rendering of ‘the scientific method’. No need to repeat things that are uncontroversial. If it were that easy that all research hypotheses and disagreements can be decided by data, plain and simple, we would not have a history of science, and we would not witness scientific controversies.

Of course scientists have data. But they need to interpret them. And very often there is room for debate, both about the quality of data, and about their proper interpretation. What counts as level of confirmation/refutation? Who has the burden of proof? I hope you will agree with me that these are vital questions for every practicing scientist, at the cutting edge of their discipline.

I completely agree that scientists should design their research in a way which excludes or minimises bias. To ensure that there is no bias, but detachment/disinterestedness, rules need to be followed. Rules are social conventions. You are illustrating the point I am trying to make.

Data and their interpretation will be communicated to others, through stunning videos that convince people by watching (seeing is believing); through graphs and tables; but also through the fact that some eminent person has done the experiment, someone who is trustworthy. Of course in theory experiments could be replicated but it has been argued that they rarely are (there seems little incentive to do so).

What I am getting at is that all these efforts are social in nature, the atoms will not communicate. As you say, people need convincing:

And, yes, an individual mind/lone researcher can certainly make discoveries. The history of science is replete with examples. And the way the genius (…) convinces others is that her observations are reproduced in other experiments, or her theory predicts the results of measurements…

Language, communication, and persuasion (convincing others) seem to be central to doing science, to establishing a new discovery, theory, model, or fact.

I wonder what you would make of examples that stray beyond the textbook examples, such as climate science. Not sure if you would use “scare quotes” here (as in climate “science”).

There are no experiments available to verify certain claims in the debate; much is done through computer modelling. What status would you accord to them? For a recent example see the exchange between two eminent researchers, as presented here:

http://judithcurry.com/2014/05/26/the-heart-of-the-climate-dynamics-debate/

Hi, Reiner.

If it were that easy that all research hypotheses and disagreements can be decided by data, plain and simple,

OK, here’s our first point of departure, already in the first paragraph of your response. The only way to decide between two opposing hypotheses is on the basis of the data. How else do you do it?

Of course scientists have data. But they need to interpret them. And very often there is room for debate, both about the quality of data, and about their proper interpretation.

Agree entirely. See this particularly relevant post from Neuroskeptic: http://blogs.discovermagazine.com/neuroskeptic/2014/01/04/reanalysis-science/

But if two scientists don’t agree on the interpretation of data — and Zarquon knows, I’ve certainly experienced more than my fair share of this of late! — then one way to resolve this is (a) do additional experiments where complementary data are obtained, and/or (b) show, through detailed quantitative and objective analysis, that some or all of the data can result from artefacts in the experimental measurements (as we have done for the “striped nanoparticle” saga).

I completely agree that scientists should design their research in a way which excludes or minimises bias. To ensure that there is no bias, but detachment/disinterestedness, rules need to be followed. Rules are social conventions. You are illustrating the point I am trying to make.

We’re starting to talk past each other here, Reiner. We agree that scientists are not free from social conventions; I have never suggested otherwise! Indeed, I quoted Ziman on this point last week in my first post on the “Circling…” conference.

But this is not the crux of our debate.

My point is, and always has been, that just because scientists are subject to social conventions does not mean that all scientific claims, theory, and data incorporate, or are biased by, social factors. There’s a huge difference between these two assertions.

…but also through the fact that some eminent person has done the experiment, someone who is trustworthy.

You know as well as (no, better than!) I do that this is the argument-from-authority fallacy. I quoted Feynman at the end of the conference: “Science is the belief in the ignorance of experts.” To rely on the eminence of a scientist as a measure of the validity of data is anti-scientific. Yes, it’s a social convention, but it’s a convention that is overridden when science is carried out correctly.

Language, communication, and persuasion (convincing others) seem to be central to doing science, to establishing a new discovery, theory, model, or fact.

Temporarily, perhaps. Ultimately, nothing else but the data and the reproducibility of the measurements will win out. It’s not a question of persuasion (although I’ll entirely admit that, unfortunately, newsworthiness often trumps rigour in modern science). But this is when science goes wrong.

The remarkable thing is that when science is done right we surmount those social conventions and we produce data and theories which are truly universal. The beauty, elegance, and sheer… honesty of this process is a key reason why I’m still a scientist (and it’s why I’m spending so much time debating this with you!).

I wonder what you would make of examples that stray beyond the textbook examples, such as climate science.

You keep using the term “textbook examples”. The furore over striped nanoparticles I’ve mentioned above is far from a textbook example – it incorporates all of the social elements we’ve been discussing (observer bias, arguments from authority, artefacts, differences in opinion re. data interpretation). But the issue will be resolved only by bypassing those social elements.

…and, no, I’m afraid that I won’t get drawn into a debate on climate change! At least not at the moment when I’ve got a stack of exam papers to mark. I’ve watched too many others disappear down the rabbit-hole following their posting of innocuous comments related to climate change.

Yes, that is wise. I made that mistake about 14 months ago and have lived to regret it ever since 🙂

I would, however, have been quite interested in Reiner’s views as to whether or not the exchange between Lacis and Bengtsson, for example, is indicative of something more significant than two people simply expressing views about peer review. Similarly we have the climategate emails that many seem to use to infer broader issues with climate science. So far the best analysis I’ve seen is “I’ve read the emails. It’s obvious”. If these emails are indicative of something more significant than people simply saying things in private that they wouldn’t say in public, where’s the analysis? Surely we’re not judging societal/political impacts on scientific fundamentals by reading some emails that were not intended to be public, or reading exchanges between scientists on blogs?

I had the impression we were talking past each other, too. But just where, I wonder. Good to see we are in agreement on many points, perhaps the misunderstanding arose because you were holding up a normative idea of science (using *that* word again) where I took your assertions as describing science as it is. I am in favour of the normative ideal, but to make it work one has, on occasion, to overcome powerful social forces…

I understand your reluctance to comment on climate science (or climate “science”?), because of the marking pressure. If I remember correctly you did in fact make some critical comments in another context.

Philip, it’s also worth noting that scientists have written about ‘regulatory science’ (Alvin Weinberg called it ‘trans-science’) to describe the activity they were undertaking when called upon to provide “answers to questions which can be asked of science and yet which cannot be answered by science” (Weinberg 1972).

http://plato.seas.gwu.edu/emse4755-6755s13/wp-content/uploads/2013/01/Weinberg-Science-and-Trans-Science.pdf

I don’t think it’s helpful to say that regulatory science wilfully erodes independence and disinterestedness: the kinds of questions that it is addressing necessarily involve engaging with issues that are not ‘just’ scientific (e.g., in doing a toxicological study of the potential hazard from a chemical substance, should one prefer a Type 2 error over a Type 1 error; or how should the results of a single-dose/animal-model study be weighed alongside a simulation of the impacts of chemical mixtures, etc.). Sociologists investigating the science/policy interface have tried to show why controversies arise when this type of hybrid issue is forgotten.

Hi, Sujatha.

I’m not for one second suggesting that science is the be-all-and-end-all of policy-making and of regulatory decisions. My point is simply that we shouldn’t call something science when it clearly isn’t science. And one of the signatures of science is that it should be disinterested (i.e. the “D” in Merton’s CUDOS norms).

If what you’re doing isn’t disinterested (i.e. isn’t driven by the understanding that your methods and analyses should, to the very best of your ability, be neutral), then I would suggest that we don’t call it science.

Philip

Being a biological scientist, I have strong sympathy with Philip’s position. Personally I prefer the word “detached” over “disinterested”. I’m very interested in what I study and can’t imagine studying it if I weren’t–I don’t think I could in fact do that very well. But when it comes to inferring cause and effect relationships between things, or even just accurately quantifying or describing something, I make a conscious effort to be detached from the results and conclusions. In this sense I think Buddhists are right on the money–detachment requires practice, and so they practice it, every day. Scientists have to try to do the same. It’s a conscious effort, but it gets easier with practice. Many people are not really aware that they actually favor a certain outcome, and this completely distorts their objectivity, destroys it in fact.

Hi, Jim.

Thanks for the words of support. For me, there’s always been a clear distinction between “disinterested” and “uninterested”, with the former indeed having the meaning of “detached”. It was in this sense that Merton (and Ziman, among others) used the term.

However, it seems that the meaning of “disinterested” is evolving: http://motivatedgrammar.wordpress.com/2012/03/21/am-i-disinterested-or-uninterested-in-this-debate/

[Brigitte, you might be interested in the etymology here!]

Philip

Thanks for that link Philip, that was very useful and interesting. And I note also that you in fact defined what you mean by the term “disinterested” in the comment I replied to.

Just took a look at your blog, Jim. Good stuff!

I particularly liked this: http://ecologicallyoriented.wordpress.com/2014/05/01/the-daily-life-of-a-scientist-part-1-the-scientist-computer-interaction/

Philip

Thanks Philip, appreciated.

Oh yes, I remember reading articles about this type of semantic drift, which is quite inevitable in the invisible-hand process that governs linguistic evolution! Nobody intends words to change meaning, but they just do because we use them in social interaction 😉 … At the moment, disinterested and uninterested are converging in meaning. In this situation, some speakers will desperately try to stick with and enforce what is perceived to be the ‘proper’ meaning, and some will begin to avoid the word disinterested altogether and say something like impartial or indeed detached… so we’ll see how things go. Remember that ‘silly’ once meant ‘holy’ (for the German speakers among us, we still use ‘selig’ in that sense)!

Reiner,

You said: I had the impression we were talking past each other, too. But just where, I wonder.

I think the nub of the matter is the following, from my previous comment:

Do you agree with this? Or do you consider all scientific claims and data to be unobjective, i.e. that the results we get, and the theories we derive, as scientists always depend on the sociology of the scientific ‘endeavour’?

Philip

Formulated in a more practical way, Reiner Grundmann: do you expect that, within our scope of application, the Martians would have a different law of gravity (sorry) or a different Pythagoras’ theorem?

…or, if you’ll excuse the hat tip to a certain Mr. Adams, would small blue furry creatures from Alpha Centauri determine the same physical laws as we would (see the last couple of paragraphs of this)?

Drat. Link is busted.

I meant this: http://physicsfocus.org/philip-moriarty-circling-the-square/

This question is not meaningful as the Martians are not defined. An empiricist would say the test of the pudding is …

Ah. That answer is exceptionally illuminating, Reiner. Thank you.

The question that Victor posed is perfectly meaningful. In answering it by saying that the Martians are not defined you are indeed arguing that there are no objective physical laws. It does not matter a jot how the Martians are defined – they will experience the same laws of physics as we do. Just how does the Curiosity rover manage to work on the surface of Mars if the same physical laws are not in operation there?

As I said in my post over at physicsfocus, this represents a huge gulf between sociologists and natural scientists. It’s a major impasse…

I think the conference would have benefited from the dialogue between Philip and Reiner that appears in these comments.

I have posted my views, which are not a direct response, at

http://magic-maths-money.blogspot.co.uk/2014/05/scientific-facts-and-democratic-values.html

My aim is to explain why I think Philip’s position is problematic, without endorsing Reiner’s.

Thanks to both for organising the conference.

Tim

A question for Philip.

Is mathematics socially constructed? This is significant in the social construction of physics, since if physics is based on maths and maths is socially constructed, then physics must, in part, be socially constructed.

I like the case of the representation of the Golden Ratio

http://plus.maths.org/content/decoding-da-vinci

as an argument that maths IS socially constructed.

Who is cleverer? God, in laying out the laws of nature for us humans to “discover”, or humans, in making sense of the world around us?

Hi, Tim.

I’ll respond over at your blog when I finish my exam marking.

For now….

1. On God: I’m not a fan. See this video.

2. On the golden ratio: I am a fan (sort of). See this. (Apologies, couldn’t resist!)

A lot of nonsense has indeed been spoken about the golden ratio. It’s not a good example to use re. the social underpinnings of mathematics because there is clearly a great deal of observer bias involved in seeing the golden ratio everywhere.

For me, mathematics indeed describes a world “out there”. It would be a position lacking in self-consistency to argue that physical laws are objective and not socially-/culturally-derived and then say that maths isn’t!

The best example, by far, to show that maths isn’t socially constructed is the Mandelbrot set. In what sense could this object, of effectively infinite complexity, be said to be socially constructed/derived? The “world” of the set is embedded in a remarkably simple (one line!) algorithm. It was waiting there to be discovered (and explored) on the complex plane.
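The “remarkably simple (one line!) algorithm” can be made concrete. What follows is a minimal sketch, not anything from the original thread: the function names `in_mandelbrot` and `ascii_mandelbrot`, the iteration cap of 200 and the plotting window are all illustrative assumptions. A complex number c belongs to the set if repeatedly applying z → z² + c, starting from z = 0, never runs off to infinity; once |z| exceeds 2 escape is guaranteed.

```python
def in_mandelbrot(c: complex, max_iter: int = 200) -> bool:
    """Return True if c appears to lie in the Mandelbrot set.

    Iterates z -> z*z + c from z = 0. Once |z| exceeds 2 the orbit is
    guaranteed to escape to infinity, so c is outside the set. If the
    orbit stays bounded for max_iter steps we treat c as inside; this is
    an approximation (max_iter is an arbitrary, assumed cut-off), since
    points very near the boundary may only escape later.
    """
    z = 0j
    for _ in range(max_iter):
        z = z * z + c  # the "one line" at the heart of the set
        if abs(z) > 2:
            return False
    return True


def ascii_mandelbrot(width: int = 60, height: int = 24) -> str:
    """Render a coarse text picture of the set over [-2, 1] x [-1.2, 1.2]."""
    rows = []
    for j in range(height):
        y = 1.2 - 2.4 * j / (height - 1)  # imaginary part, top to bottom
        row = ""
        for i in range(width):
            x = -2.0 + 3.0 * i / (width - 1)  # real part, left to right
            row += "#" if in_mandelbrot(complex(x, y)) else "."
        rows.append(row)
    return "\n".join(rows)


if __name__ == "__main__":
    print(ascii_mandelbrot())
```

For instance, c = -1 cycles between -1 and 0 forever and so stays bounded, whereas c = 1 runs 1, 2, 5, … and escapes. Raising `max_iter` and the resolution only sharpens the same picture; none of the structure is put there by the programmer.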

In one of my comments to Reiner above I provided a link to a wonderful video of the Mandelbrot set. This is a better example: https://www.youtube.com/watch?v=G_GBwuYuOOs

Philip

Phil,

Something that caught my attention: you are not a fan of God but you believe mathematics describes a world “out there”. I do not believe in the “out there” (I interpret what you say as a Platonic reference), which means I do not think maths is discovered; it is invented. That great modern sage David Baddiel has pointed out that it is precisely because he is a definite atheist that he is interested in religion; in the same way, my atheism means I can think about the mathematical aspects of Pascal’s Wager without worrying about the theological ones. My work on the ethics of finance stalled for 18 months because I did not want to take a virtue ethics line, since as far as I could see (empirical evidence) all virtue ethicists end up Catholics (including Anglicans) and I did not want to make that mistake. Putnam (a prominent mathematician) provided the escape route through pragmatism in Ethics without Ontology.

The Decoding da Vinci piece is not about the Golden Ratio but about the representation of numbers. Newton was able to derive his laws of motion because he had developed the calculus. Why was he able to do this? Because he saw a correspondence between writing functions as polynomial expansions and writing numbers as decimals, which meant he only had to work out how to differentiate x^n. Think a bit about how much of mathematics rests on the idea that functions are (or are not) represented by series. Would it have been invented (discovered, if you prefer) if Stevin had not advocated decimal notation (in the context of commerce)?
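The step Tim describes, reducing differentiation to the single case of x^n, can be illustrated with a short worked example. This is a sketch of the general idea in modern notation, not a reconstruction of Newton’s own working: once a function is written as a power series, it is differentiated term by term (valid inside the series’ radius of convergence), and the exponential function shows the rule in action.

```latex
% If f is written as a power series (a "polynomial expansion"),
f(x) = \sum_{n=0}^{\infty} a_n x^n ,
% then, inside its radius of convergence, only one rule is needed:
\frac{d}{dx}\, x^n = n\, x^{n-1}
\quad\Longrightarrow\quad
f'(x) = \sum_{n=1}^{\infty} n\, a_n x^{n-1} .

% Example: the exponential series, with a_n = 1/n!
e^x = \sum_{n=0}^{\infty} \frac{x^n}{n!}
\quad\Longrightarrow\quad
\frac{d}{dx}\, e^x = \sum_{n=1}^{\infty} \frac{n\, x^{n-1}}{n!}
                   = \sum_{m=0}^{\infty} \frac{x^{m}}{m!} = e^x .
```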

Typically we take “as given” how we write numbers, but it is not given; it is culturally distinctive. For example, the Chinese use(d) characters for numbers (though in a decimal system; I’ll come back to this), and if you try to negotiate a price with a Chinese market trader they won’t hold up ten fingers to represent 10 but will cross their index fingers to represent the character for 10. In English we count eleven, twelve, thirteen, fourteen, suggesting we did not think decimals were important but the abundant number 12 was. The French have the split at 16/17, 16 being a power of 2 and so able to be repeatedly halved.

My point is that the development of calculus was not inevitable like the “discovery” of America; it rested on a contingent social/cultural sequence. The importance of cultural relativity is that it is an antidote to racism: if you do not accept the cultural contingency of science, you leave the door open to the possibility that European science exploded in the seventeenth century because North West European Protestants were “racially” superior to everyone else. There are many flavours of relativism, which I try to elucidate in my blog post, but only the pathological extreme would reject the objective truth of experimental physics. The problem is that most of human existence takes place outside the lab. There is a subtle message to you in the aside in my blog post on the Irish Famine: be careful what you wish for in pleading for the objectivity of science. Like virtue ethics and believing in something out there, it could lead you somewhere you don’t want to go.

Hi, Tim.

Thanks for your fascinating and thought-provoking response to my comment.

The question of whether maths is discovered or invented is, as you know better than me, a very polarised debate with, as I understand it, about a 50:50 split among philosophers as to which side they choose.

I think that there’s an obvious parallel here with the objectivity of scientific laws. Clearly, as a number of those posting comments in this thread have pointed out, the progress of science is influenced by sociological “mores”. But this does not mean that scientific measurements and theories are nothing more than social constructs.

Similarly, progress in mathematics will involve sociological influence — mathematicians, like scientists, can be exceptionally creative (with all the sociological ‘baggage’ this entails). Thus, there is clearly an element of invention in the “mix”.

But, to me, it’s just like a scientist or an engineer inventing an instrument to probe a facet of Nature. The mathematical tool sometimes needs to be invented to discover a particular aspect of mathematical “reality”.

…and, in parallel, Leibniz developed his particular version of calculus: different notation, but the same fundamental connection to the objective physical universe. This is a wonderful example of the “different paths to the same fundamental scientific laws” process described in many comments above (Ruth Dixon, And Then There’s Physics, chris…).

If I can “cross-reference” my responses to different commenters (just to make this thread even more difficult to follow!), Reiner said this.

This is a remarkable — and demonstrably incorrect — assertion. For example, at the heart of the debate between Newton and Leibniz about “precedence” in the invention of calculus — and, yes, I use “invention” here advisedly — was the fact that Newton didn’t publish his results in quite as timely a fashion as Leibniz. Does that mean that Newton’s version of calculus was not objective until it was published and accepted by scientists and mathematicians of the time?

Of course not. Similarly, if I do an experiment and decide not to publish the results for twenty years, this doesn’t mean that those results are not objective facts.

Philip

P.S. There is still the matter of the Mandelbrot set to deal with. How could this mathematical object be said to be invented rather than discovered?

Hi Philip,

I thought you were going to comment on, or answer, my suggestion about where the potential misunderstanding lay. Instead you answer with another question… Well, I have to say this is too obscure 😉 to be answered precisely. I could answer Yes or No depending on the interpretation of some key words in your question.

Sorry, Reiner. I thought that I was simplifying things. I cannot see how what I’ve asked is particularly obscure but this is probably symptomatic of the differences in our worldviews.

Let me try to clarify by re-wording the question as a couple of different questions:

– Under what circumstances can the measurements made by scientists be considered to give objective results (i.e. results independent of the social setting in which they were obtained)?

– Under what circumstances can the theories developed by scientists be considered to be an objective explanation of the natural/physical world?

I really think that if we’re going to make progress we have to try to find a way to communicate on this core question of objectivity and reproducibility independent of the social environment. It’s at the root of our debate.

Sitting on the train I thought up this comment, which will probably sound totally naive to sociologists (and scientists), but here goes. Could one say that scientists like to find out how the world works and, using their wily ways, not to mention the scientific method, they have actually found out an awful lot or, to put it otherwise, have established quite a solid and robust body of knowledge (that is not to say that there is absolute certainty or absolute truth, or that there aren’t whole universes of not-yet-known things out there)? They have also contributed to us being surrounded by things that (again, on the whole) work/are the case (including E = mc²; quantum stuff; but just look around you). Sociologists of science in turn want to find out, and rightly so, how scientists go about finding out how the world works and why they want to find out particular things about the world. They also want to find out which social or political factors may impinge on why scientists want to find certain things out, how they go about finding things out and what impact these findings may have on the world. This does not, however, mean, I believe, that the things scientists have found out about the world, i.e. the knowledge we have established and used to build the world we live in (for good or for ill), are just tumbleweed brought about and tossed about by social forces. And as for those small furry creatures from Alpha Centauri, I wish they (or indeed Douglas Adams) could contribute to this comment stream! And now I need a glass of wine 😉

I don’t think your comment sounds at all naive, Brigitte.

I think that this cuts to the heart of the matter and don’t see how the issue could be expressed any more clearly than this.

You are confounding two issues in the question. The first issue is expressed in the first part of the question, ‘Under what circumstances can the theories developed by scientists be considered to be an objective explanation of the natural/physical world?’ This question will be answered by communities of practitioners, according to their standards of quality. If you are after transcendental principles you end up in the philosophy of science debate known as ‘essentialism’. What I am trying to say is there are many attempts at universal definitions, and many voices who say these attempts are elusive.

The second issue is in the parenthesis, suggesting that objective results are ‘results independent of the social setting in which they were obtained’. I am sorry to repeat myself here, but I do not think that there is any other way than a social process of communication and interaction which can lead to results which are accepted as objective. They need to be accepted by a community of practitioners to become accepted, objective facts. Otherwise they remain the belief of a single researcher.

I don’t think it is. Objectivity and reproducibility are something all researchers aim for, but cannot always achieve, because they cannot exclude interfering factors, or the object is in flux, or the object reacts to the intervention of the researcher, or the costs would be too high, or because ethical guidelines make social research near impossible (had to get this one off my chest!).

In these cases researchers apply ‘less rigorous’ methods, using small n studies, doing qualitative, interpretive, conceptual, or historical work. Some (emphasis on some) of these researchers may come to see their approach as much better than the striving towards objectivity (several ‘standpoint epistemologies’ come to mind) but I digress. However, they would probably (and happily) accept the label of being ‘non-science’.

Can one say then that all scientists, natural or social, strive to achieve findings that are objective and reproducible? They do this in communal, collaborative, antagonistic, communicative, social ways. However, the findings that are thus produced and which scientists hope will be judged as being as objective and reproducible as possible, are themselves not social in nature. E.g. the speed of light (or Grimm’s law [trying to inject some variety]) is itself not a ‘social phenomenon’; it is (as far as we know) an objective ‘fact’ independent of the social setting in which scientists work. Findings etc. can of course re-enter the social sphere by being discussed, disseminated, dismissed, disputed, used, forgotten, hyped etc.

My formulation would be that the social norms of the scientific community (“communal, collaborative, antagonistic, communicative, social ways”) are what make the science neutral in the long run. Scientists are humans and may be biased, but they are most efficient if they “strive to achieve findings that are objective and reproducible”.

Einstein (I hope his name does not remind people of gravity) did not like God playing dice and tried to refute quantum mechanics all his life. In doing so he helped us understand QM a lot better, because he played by the rules of the scientific community. (He might have been more productive had he been less biased.) His efforts did not make science biased.

Maybe gravity is better than Grimm’s law. I had to look that one up, but I learned something.

Yes, you are right: gravity trumps Grimm’s law, which is a ‘regularity’ rather than a law… and historical and retrospective rather than predictive etc. etc. Will have to think harder!

An example of such a social process might be the eventual acceptance of Peter Mitchell’s chemiosmotic theory (concerning how mitochondria work in the living cell). Each ‘side’ approached the data with its own hypothesis: the energy was stored either as an electrochemical gradient across the inner mitochondrial membrane or as an unstable chemical intermediate. The former hypothesis prevailed, and Mitchell was awarded the Nobel Prize in Chemistry in 1978.

As Mitchell said in his Nobel prize lecture, acceptance of his theory came after ‘a time of great personal, as well as scientific, trauma for many of us. … At the time of the most intensive testing of the chemiosmotic hypothesis, in the 1960s and early 1970s, it was not in the power of any of us to predict the outcome. The aspect of the present position of consensus that I find most remarkable and admirable, is the altruism and generosity with which former opponents of the chemiosmotic hypothesis have not only come to accept it, but have actively promoted it to the status of a theory.’

http://www.nobelprize.org/nobel_prizes/chemistry/laureates/1978/mitchell-lecture.pdf