December 8, 2014, by Christofas Stergianos

Image recognition on a UAV


As part of the Institute for Aerospace Technology’s INNOVATE project, the project team was tasked with the design and build of two fully autonomous UAVs (Unmanned Aerial Vehicles, also known as Unmanned Aerial Systems). The UAVs are described and demonstrated in the March 2014 INNOVATE blog. Researcher Christofas Stergianos explains how one of the most demanding aspects of this process centred around image recognition…

Image recognition does not catch the eye the way carbon fibre propellers or flashing electronics do, but a lot of work went into making sure the UAVs could find their target (a letter placed on the ground). In fact, most of that work is hidden on a small SD card.

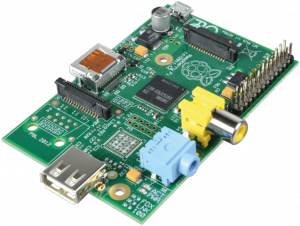

Hardware Side – Raspberry Pi

Figure 1 – Raspberry Pi

To run image recognition on board the UAV, we needed a processing unit that was powerful, light and able to run open-source software. Putting a laptop on a UAV was not possible because of weight restrictions, so we chose the next best thing: a Raspberry Pi, a small, light computer that runs open-source software and is commonly used to teach basic computer science.

Software Side – Image recognition

For the image recognition part, we used the open-source OpenCV library, more precisely its Haar Feature-based Cascade Classifier for object detection. A classifier uses an object's characteristics to decide which class (or group) it belongs to. A cascade classifier chains several classifiers together as stages. Each stage examines a region of the image and either rejects it as not containing the object or passes it on to the next, stricter stage, which applies a different set of rules. A region is reported as the object only if it passes every stage. Because most regions are rejected by the first few stages, which are cheap to evaluate, the cascade is fast while remaining accurate.

Working with a cascade classifier involves two major phases: training and detection. In the training phase, the algorithm is given a large number of positive and negative sample images. A positive image contains the target object, and the classifier uses it as a reference for what the object looks like. A negative image does not contain the object, and the classifier uses it to learn what to reject, so that it does not report the object where there is none. Even on a quite powerful (high-specification) computer, training took at least 4 hours.
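To make the stage-by-stage rejection concrete, here is a minimal pure-Python sketch of how a cascade evaluates one candidate window. The stages are hypothetical toy scoring functions, not OpenCV's trained stages (each of which combines many weak Haar-feature tests), and `run_cascade` and `overall_rates` are illustrative names. The second function shows why many stages pay off: the documentation for OpenCV's `opencv_traincascade` estimates the overall hit rate as the per-stage hit rate raised to the number of stages, and likewise for the false-alarm rate.

```python
from typing import Callable, List, Sequence, Tuple

# A stage maps a candidate window (here just a list of numbers) to a score.
# These are toy stand-ins: a real OpenCV stage sums many weak Haar-feature tests.
Stage = Callable[[Sequence[float]], float]


def run_cascade(window: Sequence[float], stages: List[Stage],
                thresholds: List[float]) -> bool:
    """Return True only if the window passes every stage, in order."""
    for stage, threshold in zip(stages, thresholds):
        if stage(window) < threshold:
            # Early rejection: the remaining, more expensive stages never run.
            return False
    return True


def overall_rates(stage_hit_rate: float, stage_false_alarm: float,
                  n_stages: int) -> Tuple[float, float]:
    """Estimate the cascade's overall hit and false-alarm rates.

    Both per-stage rates are raised to the number of stages, which is the
    estimate given in the opencv_traincascade documentation.
    """
    return stage_hit_rate ** n_stages, stage_false_alarm ** n_stages
```

With the documented `opencv_traincascade` defaults of a 0.995 minimum hit rate and a 0.5 maximum false-alarm rate per stage over 20 stages, `overall_rates(0.995, 0.5, 20)` gives an overall hit rate of roughly 0.90 and a false-alarm rate below one in a million.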

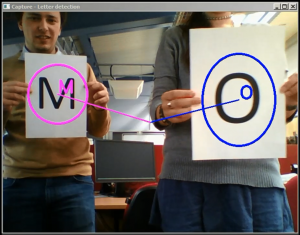

We started from an existing face recognition program written specifically to run on a Raspberry Pi. After changing some parameters and parts of the code, and a lot of testing, we were able to train the classifier on any letter of our choice.
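The post does not say which tool performed the training. A common route for OpenCV Haar cascades is the `opencv_traincascade` command-line tool (shipped with OpenCV 3.x builds), fed with a `.vec` file of positives packed by `opencv_createsamples` and a text file listing the negative images. The sketch below only assembles that command for one letter, so that retraining for a different letter is a matter of changing the inputs; the function name, folder and file names, sample counts and the 24 x 24 window are hypothetical placeholders.

```python
import shlex
from typing import List


def traincascade_args(data_dir: str, vec_file: str, bg_file: str,
                      num_pos: int, num_neg: int, num_stages: int = 20,
                      width: int = 24, height: int = 24) -> List[str]:
    """Assemble an opencv_traincascade command line for one letter.

    All paths and the default 24x24 window are placeholders.
    """
    return [
        "opencv_traincascade",
        "-data", data_dir,              # output folder; cascade.xml ends up here
        "-vec", vec_file,               # positives packed by opencv_createsamples
        "-bg", bg_file,                 # text file listing negative images
        "-numPos", str(num_pos),        # positives used to train each stage
        "-numNeg", str(num_neg),        # negatives used to train each stage
        "-numStages", str(num_stages),
        "-w", str(width), "-h", str(height),  # must match the .vec sample size
        "-featureType", "HAAR",
    ]


if __name__ == "__main__":
    # Hypothetical values for a classifier that detects the letter "A".
    args = traincascade_args("cascade_A", "letter_A.vec", "bg.txt",
                             num_pos=180, num_neg=1000)
    print(shlex.join(args))  # or subprocess.run(args, check=True) to train
```

`-numPos` is usually set somewhat below the number of samples in the `.vec` file, because later stages can consume extra positives when earlier stages reject some of them.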

Figure 2 – Recognising letters using the Haar Feature-based Cascade Classifier

For the UAV to recognise a letter in a field from a long distance, we had to experiment to find which combination of positive and negative images trained the classifier most effectively. We ended up using 1109 negative and 200 positive images, which is the amount recommended for this kind of training to achieve the best results. The negative images were taken from a video recorded by the on-board camera during one of the UAV's first flights. We made sure the negative samples were varied, ranging from plain grass to buildings, lakes and the sky, seen from the different angles the UAV recorded in flight.
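One way to turn a flight video into a varied set of negatives is to take frames spread evenly across the whole recording, so that grass, buildings, lakes and sky all end up represented. The sketch below only computes which frames to keep and formats the list file that `opencv_traincascade` reads through `-bg` (one image path per line); the frame count, folder and file-name pattern are hypothetical. Reading and saving the frames themselves would typically be done with OpenCV's `cv2.VideoCapture` and `cv2.imwrite`.

```python
from typing import List


def evenly_spaced_frames(total_frames: int, wanted: int) -> List[int]:
    """Pick `wanted` distinct frame indices spread evenly over a video."""
    if not 0 < wanted <= total_frames:
        raise ValueError("need 0 < wanted <= total_frames")
    step = total_frames / wanted  # >= 1, so the floored indices never repeat
    return [int(i * step) for i in range(wanted)]


def background_file_lines(frame_indices: List[int],
                          folder: str = "neg") -> List[str]:
    """Format one image path per line for opencv_traincascade's -bg file."""
    return [f"{folder}/frame_{idx:06d}.png" for idx in frame_indices]


if __name__ == "__main__":
    # Hypothetical: a flight video of 30,000 frames, 1109 negatives wanted.
    indices = evenly_spaced_frames(30000, 1109)
    for line in background_file_lines(indices)[:3]:
        print(line)  # in practice, write all lines to bg.txt
```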

Figure 3 – Negative samples

Figure 4 – Positive sample

For the positive images, we tried a number of approaches to get the best samples. First, we used the UAV to photograph a large letter that we had made on the grass. We also took photos of a smaller letter on the grass with an ordinary camera. However, we found that the best input for the classifier was an image created with image processing software: we took real photos of the background and added a large letter in the middle. Distinct colours, strong contrast and sharp, straight edges made the classifier more accurate.
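The synthetic positives can be sketched as pasting a letter into the middle of a background photo and recording where it landed, since `opencv_createsamples -info` expects an annotation file with one line per image: the path, the number of objects, then `x y w h` for each object. In this sketch, images are plain lists of 8-bit grey values so that it runs without extra libraries; the function names and file paths are hypothetical, and the project itself used image processing software rather than code like this.

```python
from typing import List, Tuple

Image = List[List[int]]          # rows of 8-bit grey values
Box = Tuple[int, int, int, int]  # x, y, width, height


def centred_box(bg_w: int, bg_h: int, letter_w: int, letter_h: int) -> Box:
    """Where a letter of the given size lands when centred on the background."""
    if letter_w > bg_w or letter_h > bg_h:
        raise ValueError("letter must fit inside the background")
    return (bg_w - letter_w) // 2, (bg_h - letter_h) // 2, letter_w, letter_h


def paste(background: Image, letter: Image, box: Box) -> Image:
    """Return a copy of the background with the letter written into `box`."""
    x, y, w, h = box
    out = [row[:] for row in background]
    for r in range(h):
        out[y + r][x:x + w] = letter[r][:w]
    return out


def info_line(path: str, box: Box) -> str:
    """One opencv_createsamples -info line: path, object count, x y w h."""
    x, y, w, h = box
    return f"{path} 1 {x} {y} {w} {h}"
```

For example, a 200 x 200 letter centred on a 640 x 480 background sits at `(220, 140, 200, 200)`, giving the annotation line `pos/A_000.png 1 220 140 200 200`.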

Using image processing software, we created 200 positive images from the original photo, covering a variety of angles and lighting conditions. After training the classifier and testing it with different combinations of positive and negative images, we arrived at the parameter configuration that recognised the given letter most accurately.
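The 200 variants can be generated systematically as a grid of rotation angles and brightness factors: 20 angles times 10 brightness levels gives exactly 200. The angle range and brightness factors below are hypothetical, not the values used in the project, and the rotation is a simple nearest-neighbour version on a list-of-lists greyscale image (with OpenCV one would typically use `cv2.getRotationMatrix2D` together with `cv2.warpAffine`).

```python
import math
from itertools import product
from typing import List, Tuple

Image = List[List[int]]


def variant_grid(angles: List[float],
                 brightness: List[float]) -> List[Tuple[float, float]]:
    """Every (angle, brightness factor) combination, one per positive image."""
    return list(product(angles, brightness))


def rotate_nearest(img: Image, degrees: float, fill: int = 0) -> Image:
    """Rotate a greyscale image about its centre with nearest-neighbour sampling."""
    h, w = len(img), len(img[0])
    cy, cx = (h - 1) / 2, (w - 1) / 2
    a = math.radians(degrees)
    cos_a, sin_a = math.cos(a), math.sin(a)
    out = [[fill] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Inverse mapping: find the source pixel that lands on (x, y).
            sx = cos_a * (x - cx) + sin_a * (y - cy) + cx
            sy = -sin_a * (x - cx) + cos_a * (y - cy) + cy
            ix, iy = round(sx), round(sy)
            if 0 <= ix < w and 0 <= iy < h:
                out[y][x] = img[iy][ix]
    return out


def scale_brightness(img: Image, factor: float) -> Image:
    """Multiply every pixel by `factor`, clipping to the 0-255 range."""
    return [[min(255, max(0, round(v * factor))) for v in row] for row in img]


if __name__ == "__main__":
    angles = list(range(-38, 39, 4))                        # 20 hypothetical angles
    factors = [round(0.6 + 0.1 * i, 1) for i in range(10)]  # 0.6 .. 1.5
    print(len(variant_grid(angles, factors)))               # 200
```

Rotating a composite changes the letter's bounding box, so any annotation line has to be recomputed for each rotated variant.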

Results

In autonomous mode, when the UAV operates without any external input (remote control), it is required to identify the targets (numbers or letters), adjust its course and position itself to drop the payload (in the case of the fixed-wing aircraft) or land to deliver it (in the case of the rotary-wing UAV).
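The post does not describe how detections were turned into course corrections. As a hypothetical illustration only, one simple approach is to convert the detected box into a normalised offset from the image centre, which a flight controller could then try to drive towards zero before releasing the payload or landing. The function names and the 0.1 tolerance are assumptions, and the sketch ignores camera tilt and altitude, which a real system would have to account for.

```python
from typing import Tuple

Box = Tuple[int, int, int, int]  # x, y, width, height in pixels


def centre_offset(box: Box, frame_w: int, frame_h: int) -> Tuple[float, float]:
    """Offset of the box centre from the frame centre, scaled to [-1, 1].

    Positive x: target right of centre. Positive y: target below centre,
    because image rows are numbered downwards.
    """
    x, y, w, h = box
    cx, cy = x + w / 2, y + h / 2
    return 2 * cx / frame_w - 1, 2 * cy / frame_h - 1


def over_target(offset: Tuple[float, float], tolerance: float = 0.1) -> bool:
    """Hypothetical release/landing check: target close enough to centre."""
    return abs(offset[0]) <= tolerance and abs(offset[1]) <= tolerance
```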

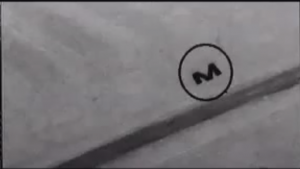

After testing in real conditions, the UAVs were able to recognise the letter lying on the ground. There were some false positives, but we could ignore them because most of them appeared in a single frame only. Below is a picture (Figure 5) captured from the UAV's video feed (watch the full-length video here). It shows the image recognition software in action! Note that it has no problem identifying the letter, even though the camera is tilted and the picture is taken at an angle rather than vertically.

Greyscale (black and white) imagery, rather than full colour, was used to speed up the recognition process, since the Raspberry Pi's CPU was working at the limit of its capabilities (it's not as powerful or fast as a desktop PC, after all!). We also inverted the image colours, because the recognition process proved better at finding black patterns with sharp edges.
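Both tricks can be sketched in a few lines. `inverted_grey` converts one BGR pixel to greyscale with the BT.601 luma weights (the ones OpenCV documents for its RGB-to-grey conversion) and then inverts it; for whole frames, OpenCV offers `cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)` and `cv2.bitwise_not`, after which the classifier's `detectMultiScale` runs on the result. `PersistenceFilter` is a hypothetical helper, not code from the project: it confirms a target only once it has been detected in at least `needed` of the last `window` frames, so a false positive that appears in a single frame is ignored.

```python
from collections import deque
from typing import Deque


def inverted_grey(b: int, g: int, r: int) -> int:
    """BGR pixel -> greyscale (BT.601 luma weights) -> inverted 8-bit value."""
    grey = round(0.299 * r + 0.587 * g + 0.114 * b)
    return 255 - grey


class PersistenceFilter:
    """Confirm a target only if it was detected in `needed` of the last `window` frames."""

    def __init__(self, window: int = 5, needed: int = 3) -> None:
        if not 1 <= needed <= window:
            raise ValueError("need 1 <= needed <= window")
        self.history: Deque[bool] = deque(maxlen=window)
        self.needed = needed

    def update(self, detected: bool) -> bool:
        """Record this frame's result and report whether the target is confirmed."""
        self.history.append(detected)
        return sum(self.history) >= self.needed
```

This is separate from the `minNeighbors` parameter of `detectMultiScale`, which groups overlapping hits within one frame; the filter above works across frames. OpenCV's own conversion uses fixed-point arithmetic, so its grey values may differ from this sketch by one level.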

Figure 5 – Video feedback from UAV

It took a lot of time to perfect the procedure, but after numerous test flights, seeing the payload delivered successfully convinced us that it was completely worth it!
