January 21, 2014, by Tara de Cozar

Human/agent interactions – disaster planning and response

Some interesting collaborative research is taking place in the Mixed Reality Lab over in the School of Computer Science. Researchers are working on a national project called ORCHID, which looks at what it calls human/agent interaction: basically, how people and computers interact to respond to specific situations.

Joel Fischer, one of the ORCHID team at Nottingham, has made a video about a particular element of the project — disaster response. Essentially, this looks at how we can use technology to help first responders make better decisions, reduce the potential for injury and save more lives.

By using past disaster info from across the world, the team create models for different scenarios, and test the applications they’ve developed. They’ve even taken part in simulated disasters with Hampshire County Council to learn about professional disaster response. They are also currently exploring ways to work with Rescue Global, a London-based charity that provides advanced search, rescue and command support in the aftermath of disasters.

In disaster situations, there’s a general lack of information. Each person involved will have a different level of understanding of what caused the event, the extent of the disaster, who is involved and how many people there are in the surrounding area. By coordinating and analysing information collected from current technology, such as smartphones and social media, the team want to develop new tools to better assess the situation, so that the most effective response can be deployed.

So by using, say, traffic information and images gathered through social media from the geographic location of a disaster, response planners can amend their evacuation plans and routes.

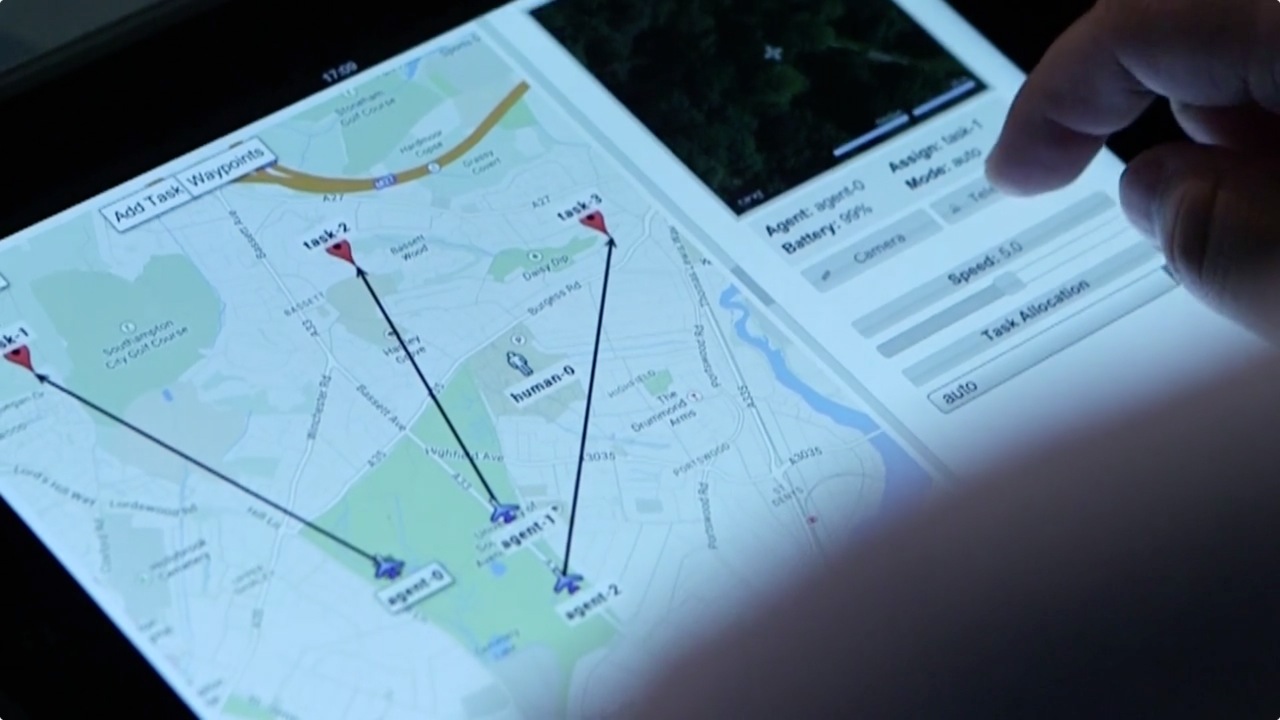

One way to add to the information gathered is to use unmanned aerial vehicles, or drones. One strand of the ORCHID research is looking at how applications can guide the initial deployment of these drones, working out the points at which human decisions are needed.

The Nottingham team have been involved in another strand — creating a game in which the application assesses the scenario and suggests a plan, teams and actions.

“The goal of the game probe is to understand how teams of people supported by a planning agent, situation awareness and communication tools, coordinate a time-critical rescue mission under cognitive and physical stress. This furthers our understanding of how agent-based technologies that support planning in these situations should be designed,” said Joel. Which is comp sci researcher speak for ‘making things better with the right technology’, I think.

You can watch Joel’s video here, and find out more about ORCHID at the project website. ORCHID has also been covered in the press recently, on the New Scientist website and at SciDev.
